Deep Learning

Advances in machine learning and deep learning methods for traffic sign detection are critical for improving road safety and developing intelligent transportation systems. However, the lack of a comprehensive, publicly available dataset on Indian traffic has been a significant challenge for researchers in this field. To close this gap, we introduce the Indian Road Traffic Sign Detection dataset (IRTSD-Datasetv1), which captures real-world images across diverse conditions.

924 Views

This study systematically examines research trends and hotspots in deep learning for autonomous vehicles (AVs) through a bibliometric analysis. By scrutinizing research publications from various countries spanning 2017 to 2023, it aims to summarize effective research methodologies and identify potential innovative pathways to foster further advances in AV research. A total of 1,239 publications from the core collection of scientific networks were retrieved and used to construct a clustering network.

116 Views

The training trajectory datasets are collected from real users as they explore volume datasets in our interactive 3D visualization framework. The collected training data consist of trajectories of points of view (POVs) in Cartesian space. Multiple volume datasets with distinct spatial features and transfer functions are used so that the collected trajectories are comprehensive. The initial point is randomly selected for each user. The collected trajectories are cleaned by removing POV outliers caused by user misoperation, which improves uniformity.
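The description does not say how POV outliers are detected, so the following is only a minimal sketch of one plausible cleaning rule, not the authors' method. It assumes a trajectory is a sequence of (x, y, z) points, trusts the first point, and drops any point whose jump from the last kept point exceeds a multiple of the median step length. The function name `remove_pov_outliers` and the `max_ratio` parameter are hypothetical.

```python
from math import dist
from statistics import median


def remove_pov_outliers(trajectory, max_ratio=5.0):
    """Drop POVs that jump too far from the last kept point.

    Assumption (not from the dataset description): an outlier is a point
    whose distance to the previously kept point exceeds `max_ratio` times
    the median distance between consecutive points.
    """
    points = [tuple(p) for p in trajectory]
    if len(points) < 3:
        return points  # too short to estimate a typical step length

    # Typical step length between consecutive POVs, robust to a few large jumps.
    steps = [dist(a, b) for a, b in zip(points, points[1:])]
    limit = max_ratio * median(steps)

    cleaned = [points[0]]  # the first point is trusted
    for point in points[1:]:
        if dist(cleaned[-1], point) <= limit:
            cleaned.append(point)
    return cleaned


# Hypothetical trajectory with one accidental jump at (50, 50, 50).
raw = [(0, 0, 0), (1, 0, 0), (2, 0, 0), (50, 50, 50), (3, 0, 0), (4, 0, 0)]
cleaned = remove_pov_outliers(raw)
print(cleaned)
```

With the hypothetical trajectory above, the single far-away point is dropped and the remaining five points are kept in order. A real pipeline would likely need a rule tuned to the actual sampling rate and interaction device.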

89 Views

In deep learning, images are utilized due to their rich information content, spatial hierarchies, and translation invariance, rendering them ideal for tasks such as object recognition and classification. The classification of malware using images is an important field for deep learning, especially in cybersecurity. Within this context, the Classified Advanced Persistent Threat Dataset is a thorough collection that has been carefully selected to further this field's study and innovation.

1528 Views

The COronaVIrus Disease of 2019 (COVID-19) pandemic poses a significant global challenge, with millions affected and millions of lives lost. This study introduces a privacy-conscious approach for early detection of COVID-19, employing breathing sounds and chest X-ray images. Leveraging blockchain and optimized neural networks, the proposed method ensures data security and accuracy. The chest X-ray images undergo preprocessing, segmentation and feature

123 Views

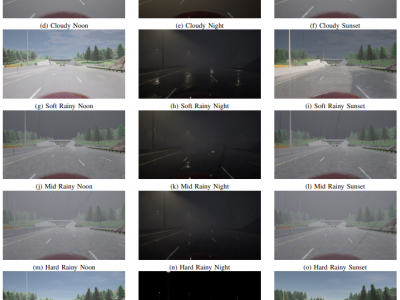

The JKU-ITS AVDM contains data from 17 participants performing different tasks with various levels of distraction.

The data collection was carried out in accordance with the relevant guidelines and regulations and informed consent was obtained from all participants.

The dataset was collected using the JKU-ITS research vehicle with automated capabilities under different illumination and weather conditions along a secure test route within the

651 Views

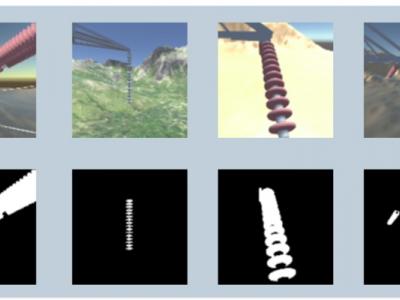

This database contains Synthetic High-Voltage Power Line Insulator Images.

There are two sets of images: one for image segmentation and another for image classification.

The first set contains images with different insulator materials and landscapes. The landscape types are Mountains, Forest, Desert, City, Stream, and Plantation. Each landscape type has 2,627 images per insulator type (Ceramic, Polymeric, or Glass), for a total of 47,286 distinct images.
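As a quick sanity check, the stated total matches the product of six landscape types, three insulator types, and 2,627 images per combination. The variable names below are illustrative only, and the check assumes the total refers to this first set alone.

```python
# Landscape and insulator types as listed in the dataset description.
landscapes = ["Mountains", "Forest", "Desert", "City", "Stream", "Plantation"]
insulator_types = ["Ceramic", "Polymeric", "Glass"]
images_per_combination = 2627  # images per landscape type and insulator type

total_images = len(landscapes) * len(insulator_types) * images_per_combination
print(total_images)  # 47286
```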

437 Views

The "Thaat and Raga Forest (TRF) Dataset" represents a significant advancement in computational musicology, focusing specifically on Indian Classical Music (ICM). While Western music has seen substantial attention in this field, ICM remains relatively underexplored. This manuscript presents the utilization of Deep Learning models to analyze ICM, with a primary focus on identifying Thaats and Ragas within musical compositions. Thaats and Ragas identification holds pivotal importance for various applications, including sentiment-based recommendation systems and music categorization.

661 Views

Safety of the Intended Functionality (SOTIF) addresses sensor performance limitations and deep learning-based object detection insufficiencies to ensure the intended functionality of Automated Driving Systems (ADS). This paper presents a methodology examining the adaptability and performance evaluation of the 3D object detection methods on a LiDAR point cloud dataset generated by simulating a SOTIF-related Use Case.

74 Views

3D datasets used in Guangming Ni et al., "Toward ground-truth optical coherence tomography via three-dimensional unsupervised deep learning processing and data", 2023. There are two datasets: OCT-R1 and OCT-R2. OCT-R1 contains three-dimensional (3D) data collected from 41 human eyes using a BM-400K BMizar (Topi Ltd.) OCT scanner at Sichuan Provincial People's Hospital. To enhance the diversity of the data, we performed scans over two different ranges.

232 Views
