Signal Processing

Our dataset consists of a pretraining dataset and a fine-tuning dataset. The pretraining dataset is generated using the FDTD method. We simulated a scenario for underground pipeline detection, where the transmitter (tx) is located above the ground, and the receiver (rx) is approximately 30 cm away from the transmitter. The target pipeline buried underground has depths ranging from 1 to 3 meters and a length of approximately 10 meters.

88 Views

This dataset was recorded from 26 subjects at Shandong Provincial Hospital using wearable ECG devices. It comprises 208 segments, each 30 seconds long, sampled at 256 Hz. All data are stored in '.mat' format. The recordings were made in free-living conditions with various noise sources, and the dataset can be used for signal quality assessment as the unacceptable category.
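The figures quoted for the ECG dataset imply a fixed segment length, which is useful as a sanity check after loading the files. The following is a minimal sketch using only the numbers stated above; the function names are hypothetical, and the layout of the '.mat' files (variable names, array shape) is not described here, so loading is left out.

```python
# Figures taken from the dataset description.
SAMPLING_RATE_HZ = 256
SEGMENT_SECONDS = 30
NUM_SEGMENTS = 208


def expected_samples(rate_hz: int, seconds: int) -> int:
    """Number of samples in one segment of the given duration."""
    return rate_hz * seconds


def has_expected_length(segment, rate_hz: int = SAMPLING_RATE_HZ,
                        seconds: int = SEGMENT_SECONDS) -> bool:
    """Hypothetical helper: check that a loaded 1-D segment has the expected length."""
    return len(segment) == expected_samples(rate_hz, seconds)


samples_per_segment = expected_samples(SAMPLING_RATE_HZ, SEGMENT_SECONDS)
total_samples = samples_per_segment * NUM_SEGMENTS
total_minutes = NUM_SEGMENTS * SEGMENT_SECONDS / 60

print(samples_per_segment)  # 7680
print(total_samples)        # 1597440
print(total_minutes)        # 104.0
```

A segment whose length differs from 7680 samples would suggest a different sampling rate or a truncated recording.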

375 Views

The Numerical Latin Letters (DNLL) dataset consists of Latin numeric letters organized into 26 distinct letter classes, corresponding to the Latin alphabet. Each class within this dataset encompasses multiple letter forms, resulting in a diverse and extensive collection. These letters vary in color, size, writing style, thickness, background, orientation, luminosity, and other attributes, making the dataset highly comprehensive and rich.

517 Views

This dataset presents a collection of real-world RF signals encompassing three prominent wireless communication technologies: Wi-Fi (IEEE 802.11ax), LTE, and 5G. The data aims to facilitate advanced research in spectrum analysis, interference identification, and wireless communication optimization. The signals were meticulously captured under varying conditions to ensure a broad representation of real-world scenarios, including different modulation schemes, channel conditions, and data rates.

3307 Views

Wearable and low-power devices are vulnerable to side-channel attacks, which can retrieve private data (such as sensitive data or the private key of a cryptographic algorithm) from externally measured quantities such as power consumption. These attacks depend strongly on the data being encrypted: the more variable the data, the more information an attacker has to work with. This database contains ECG data measured with a wearable sensorized garment during different levels of activity.

376 Views

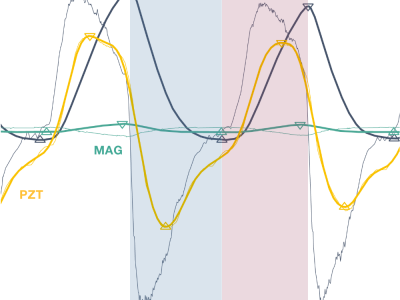

Dataset for validation of a new magnetic field-based wearable breathing sensor (MAG), which uses the movement of the chest wall as a surrogate measure of respiratory activity. Based on the principle of variation in magnetic field strength with the distance from the source, this system explores Hall effect sensing, paired with a permanent magnet, embedded in a chest strap.

529 Views

Dataset for validation of a new magnetic field-based wearable breathing sensor (MAG), which uses the movement of the chest wall as a surrogate measure of respiratory activity. Based on the principle of variation in magnetic field strength with the distance from the source, this system explores Hall effect sensing, paired with a permanent magnet, embedded in a chest strap.

146 Views

The LTE_RFFI project sets up a radio frequency fingerprint identification system for LTE devices using deep learning techniques. The LTE uplink signals are collected from ten different LTE devices using a USRP N210 in different locations. The sampling rate of the USRP is 25 MHz. The received signal is resampled to 30.72 MHz in MATLAB. The signals are then processed and saved as MAT files. More details about the datasets can be found in the README document.
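The resampling step from 25 MHz to 30.72 MHz corresponds to a rational rate change, and the ratio can be reduced to small integer factors. The sketch below only works out that arithmetic; it is not the project's MATLAB code, and the helper name `resampled_length` is hypothetical. The length formula assumes a resampler that rounds the output length up, which is a common convention but not something the description states.

```python
from fractions import Fraction

# Rates taken from the dataset description.
SOURCE_RATE_HZ = 25_000_000   # USRP sampling rate
TARGET_RATE_HZ = 30_720_000   # rate after resampling

# Reduce the rate change to the smallest integer up/down factors.
ratio = Fraction(TARGET_RATE_HZ, SOURCE_RATE_HZ)
up, down = ratio.numerator, ratio.denominator


def resampled_length(num_samples: int) -> int:
    """Hypothetical helper: output length, assuming the resampler rounds up."""
    return -(-num_samples * up // down)  # integer ceiling division


print(up, down)                          # 768 625
print(resampled_length(625))             # 768
print(resampled_length(SOURCE_RATE_HZ))  # 30720000 (one second of input)
```

A polyphase resampler such as SciPy's `scipy.signal.resample_poly(x, up, down)` takes these two integers directly as its upsampling and downsampling factors.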

1019 Views

This dataset accompanies the paper "A feature pyramid network based partition map prediction method for efficient encoding in Video-based Point Cloud Compress" and is the data the authors used to train the FPN described in the paper. The CU partitioning data were obtained by running V-PCC with VVC as the video encoder to perform CU partitioning, with each sample extracted from a 64×64 CU. The encoder configuration used when extracting the data is described in Section III-A of the paper. The point cloud source is the first 32 frames of the basketball_player sequence from owlii, and the dataset includes partitioning results for 5 different QPs.

83 Views
