Computer Vision

The TUROS-TS encompasses 5,357 Google Street View images with 8,775 traffic sign instances covering 9 categories and 28 classes. Three subsets of the dataset were created: test (10%-1050 images 579), validation (20% -1050 images), and training (70% - 3728 images). It is available upon request. If you want to train and test the data set. Please send an email to afef.zwidi@regim.usf.tn

- Categories:

55 Views

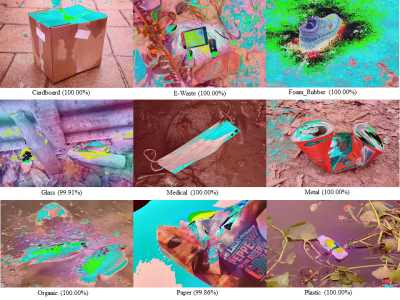

55 ViewsAn automatic waste classification system embedded with higher accuracy and precision of convolution neural network (CNN) model can significantly the reduce manual labor involved in recycling. The ConvNeXt architecture has gained remarkable improvements in image recognition. A larger dataset, called TrashNeXt, comprising 23,625 images across nine categories has been introduced in this study by combining and thoroughly analyzing various pre-existing datasets.

- Categories:

334 Views

334 ViewsThe IARPA Space-Based Machine Automated Recognition Technique (SMART) program was one of the first large-scale research program to advance the state of the art for automatically detecting, characterizing, and monitoring large-scale anthropogenic activity in global scale, multi-source, heterogeneous satellite imagery. The program leveraged and advanced the latest techniques in artificial intelligence (AI), computer vision (CV), and machine learning (ML) applied to geospatial applications.

- Categories:

132 Views

132 ViewsThe Dash Cam Video Dataset is a comprehensive collection of real-world road footage captured across various Indian roads, focusing on lane conditions and traffic dynamics. Indian roads are often characterized by inconsistent lane markings, unstructured traffic flow, and frequent obstructions, making lane detection and traffic identification a challenging task for autonomous vehicle systems.

- Categories:

419 Views

419 Views

OpenGL is a library for doing computer graphics.By using it, we can create interactive applications which

render high-quality color images composed of 3D geometric objects and images. OpenGL is window and

operating system independent. As such, the part of our application which does rendering is platform inde-

pendent.However, in order for OpenGLto be able to render, it needs awindow to draw into. Generally, The

Project OpenGL Ludo-Board Game is a computer graphics project. The computer graphics project used

- Categories:

18 Views

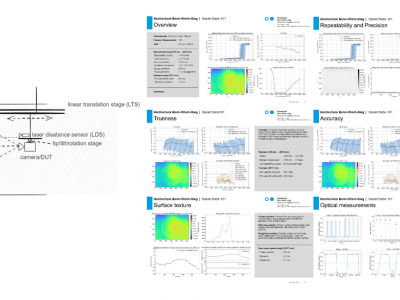

18 ViewsThis dataset accompanies the study “Universal Metrics to Characterize the Performance of Imaging 3D Measurement Systems with a Focus on Static Indoor Scenes” and provides all measurement data, processing scripts, and evaluation code necessary to reproduce the results. It includes raw and processed point cloud data from six state-of-the-art 3D measurement systems, captured under standardized conditions. Additionally, the dataset contains high-speed sensor measurements of the cameras’ active illumination, offering insights into their optical emission characteristics.

- Categories:

139 Views

139 Views

Following the setup of previous works [8, 16], we conducted experiments on various bit image restoration tasks.

We utilized a dataset of 2000 16-bit images, with training

data sourced from SINTEL [37] and FIVE-K [38]. SINTEL

is an animated short film dataset containing over 20,000 16-

bit lossless images with a resolution of 436 × 1024 pixels. In

FIVE-K, randomly select images from 5,000 16-bit natural

images for the experiment.The test set includes 8 images

randomly chosen from the SINTEL dataset (referred to as

- Categories:

27 Views

27 ViewsBinary classification is the most suitable task considering the common use cases in MCUs. Numerous datasets for image classification have been proposed. The Visual Wake Words (VWW) dataset, which is derived from the COCO dataset, distinguishes between ‘w/ person’ and ‘w/o person’ and is designed for object detection on MCUs. Therefore, datasets for binary classification and object detection exist. However, the dataset for binary classification has not been proposed for the semantic segmentation task.

- Categories:

62 Views

62 Views