Geoscience and Remote Sensing

The "CloudPatch-7 Hyperspectral Dataset" comprises a manually curated collection of hyperspectral images, focused on pixel classification of atmospheric cloud classes. This labeled dataset features 380 patches, each a 50x50 pixel grid, derived from 28 larger, unlabeled parent images approximately 4402-by-1600 pixels in size. Captured using the Resonon PIKA XC2 camera, these images span 462 spectral bands from 400 to 1000 nm.
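As a rough sense of scale, the figures quoted above can be turned into pixel counts. The sketch below uses only the numbers in the description. The constant and function names are illustrative, the parent size is the approximate one stated, and the labeled fraction assumes the patches do not overlap, which the description does not confirm.

```python
# Figures quoted in the dataset description (parent size is approximate).
NUM_PATCHES = 380
PATCH_SIDE = 50               # pixels per side of a labeled patch
NUM_PARENTS = 28
PARENT_SHAPE = (4402, 1600)   # approximate parent image size in pixels


def labeled_pixel_count(num_patches=NUM_PATCHES, side=PATCH_SIDE):
    """Total number of labeled pixels across all patches."""
    return num_patches * side * side


def parent_pixel_count(num_parents=NUM_PARENTS, shape=PARENT_SHAPE):
    """Total number of pixels across all parent images."""
    return num_parents * shape[0] * shape[1]


def labeled_fraction():
    """Share of parent pixels that carry a label.

    Assumption: patches do not overlap one another.
    """
    return labeled_pixel_count() / parent_pixel_count()


if __name__ == "__main__":
    print(labeled_pixel_count())          # 380 * 50 * 50
    print(parent_pixel_count())           # 28 * 4402 * 1600
    print(f"{labeled_fraction():.2%}")    # labeled share of all parent pixels
```

Under these assumptions the labeled patches cover well under one percent of the parent imagery, and each labeled pixel carries a 462-band spectrum.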

639 Views

This dataset includes 30 hyperspectral cloud images captured during the summer and fall of 2022 at Auburn University at Montgomery, Alabama, USA (Latitude N, Longitude W) using a Resonon Pika XC2 Hyperspectral Imaging Camera. The images were recorded with the Spectronon software at integration times between 9.0 and 12.0 ms, a frame rate of approximately 45 Hz, and a scan rate of 0.93 degrees per second. The images are calibrated to give spectral radiance in microflicks at 462 spectral bands in the 400 to 1000 nm wavelength region with a spectral resolution of 1.9 nm.
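The acquisition settings above imply a few derived quantities that help when interpreting the images. The following is a minimal sketch of that arithmetic, not a description of the camera software. The function names are illustrative, and the band-spacing figure assumes the 462 bands are evenly spaced across 400 to 1000 nm, which the description does not state.

```python
def angular_step_deg(scan_rate_deg_s=0.93, frame_rate_hz=45.0):
    """Angle swept between consecutive frames, in degrees."""
    return scan_rate_deg_s / frame_rate_hz


def frame_period_ms(frame_rate_hz=45.0):
    """Time between consecutive frames, in milliseconds."""
    return 1000.0 / frame_rate_hz


def mean_band_spacing_nm(start_nm=400.0, stop_nm=1000.0, num_bands=462):
    """Average spacing between band centers.

    Assumption: bands are evenly spaced over the stated range.
    """
    return (stop_nm - start_nm) / (num_bands - 1)


if __name__ == "__main__":
    print(f"angular step per frame: {angular_step_deg():.4f} deg")
    print(f"frame period: {frame_period_ms():.1f} ms")
    print(f"mean band spacing: {mean_band_spacing_nm():.2f} nm")
```

With the stated values, each frame advances the scan by roughly 0.02 degrees, the 9.0 to 12.0 ms integration times fit within the roughly 22 ms frame period, and the average band spacing (about 1.3 nm) is finer than the quoted 1.9 nm spectral resolution.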

245 Views

This dataset comprises SRTM (Shuttle Radar Topography Mission) DEM (Digital Elevation Model) data covering the Indian terrain, with a resolution of 90 m x 90 m. The data support terrain analysis by depicting elevations above sea level. The detailed topographical information facilitates applications including land use planning, infrastructure development, and environmental modeling.

70 Views

This dataset contains results of the 60 GHz indoor sensing measurement campaign using a bistatic OFDM radar based on 5G-specified positioning reference signals (PRSs). The data can be used for testing end-to-end indoor millimeter-wave radio positioning as well as simultaneous localization and mapping (SLAM) algorithms, including channel parameter estimation. Beamformed PRS with dense angular sampling in transmission and reception allows efficient capture of line-of-sight (LoS) as well as multipath components.

2169 Views

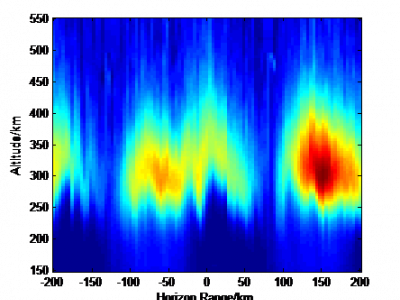

A method is proposed to reduce electron density irregularities by injecting chemicals into the scintillation region that attach electrons through chemical reactions with the background ionosphere. A physical model of ionospheric scintillation mitigation based on the release of electron-density-depleting chemicals is established. The evolution of the electron density irregularities, the plasma instability growth rate, and the beacon amplitude and phase fluctuations before and after scintillation mitigation were simulated.

205 Views

Code and data for "The ES-GNF method of unstructured tetrahedral mesh for fast inversion of gravity and magnetic data with undulating terrain". The dataset includes the programs for building the unstructured tetrahedral mesh and computing the corresponding kernel matrix, together with the gravity and magnetic anomaly data and inversion results from the model test part of the manuscript.

61 Views

In our ever-expanding world of advanced satellite and communications systems such as 5G, passive radiometer sensors used in Earth observation face a growing challenge: radio frequency interference (RFI) caused by anthropogenic signals. To address this, we urgently need effective methods to quantify the impacts of 5G on Earth-observing radiometers. Unfortunately, the lack of substantial datasets in the radio frequency (RF) domain, especially for active/passive coexistence, hinders progress.

596 Views

This dataset comprises multimission altimeter products, specifically multimission altimetry-derived cyclonic eddy trajectories in the South China Sea region (109°E-121°E, 3°N-23°N). These products are released by the Archiving, Validation and Interpretation of Satellite Oceanographic data (AVISO) with the support of the Copernicus Marine Environment Monitoring Service (CMEMS). The eddy trajectories are derived from the "all satellites" constellation maps distributed by CMEMS. The dataset is intended for the study of ocean mesoscale eddies.
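The regional bounds quoted above can be applied as a simple bounding-box filter. The sketch below is illustrative only: the `(track_id, lon, lat)` record layout and all names are hypothetical and do not reflect the actual file format of the AVISO product. It keeps the tracks whose every sample lies inside the stated South China Sea box.

```python
# Region quoted in the description: 109°E-121°E, 3°N-23°N.
SCS_BOUNDS = {"lon_min": 109.0, "lon_max": 121.0, "lat_min": 3.0, "lat_max": 23.0}


def in_region(lon, lat, bounds=SCS_BOUNDS):
    """True if a point (degrees east, degrees north) lies inside the box."""
    return (bounds["lon_min"] <= lon <= bounds["lon_max"]
            and bounds["lat_min"] <= lat <= bounds["lat_max"])


def tracks_inside(points, bounds=SCS_BOUNDS):
    """Return the sorted ids of tracks whose every sample is inside the box.

    `points` is an iterable of (track_id, lon, lat) tuples; this layout is
    a hypothetical stand-in for whatever the real product provides.
    """
    all_ids = set()
    outside_ids = set()
    for track_id, lon, lat in points:
        all_ids.add(track_id)
        if not in_region(lon, lat, bounds):
            outside_ids.add(track_id)
    return sorted(all_ids - outside_ids)


if __name__ == "__main__":
    # Made-up sample points, for illustration only.
    sample = [
        (1, 112.5, 15.0), (1, 113.0, 15.4),   # stays inside the box
        (2, 120.5, 20.0), (2, 122.0, 20.5),   # drifts east of 121°E
    ]
    print(tracks_inside(sample))
```

Requiring every sample to be inside the box is one possible choice. Keeping tracks with at least one sample inside would be an equally reasonable alternative, depending on the study.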

65 Views

To test the feasibility of using processed Sentinel-2 data and GlobeLand30 as the input image and ground truth, respectively, of subspace clustering for land cover classification, a dataset named 'MSI_Gwadar' was created.

'MSI_Gwadar' is a multispectral remote sensing image of Gwadar (a town and seaport in southwestern Pakistan) and its four regions of interest. The dataset includes MATLAB data files and ground truth files for the study area and for each of the four regions of interest.

313 Views