Sensors

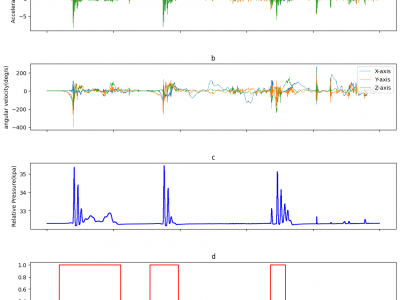

The data were collected by outfitting one of the players with the experimental balloon, which incorporated the embedded circuit and sensors. The sensors were positioned at the top right of the player inside the bubble balloon, in which the player stands. Sensor data were sampled at the following frequencies: accelerometer, 1000 Hz; gyroscope, 1000 Hz; pressure, 40 Hz. The experiment involved five different players, which allowed the dataset to include diverse samples that account for variations in player metrics, movements, and gameplay dynamics.
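Because the streams arrive at different rates, joint analysis usually starts by putting them on a common time base. The following is a minimal Python sketch that linearly interpolates the 40 Hz pressure stream onto the 1000 Hz accelerometer timeline. It assumes uniformly spaced samples that start at the same instant; the dataset's actual file layout and timestamp format are not described here, and `timestamps` and `interp_to` are hypothetical helpers, not part of the released data.

```python
from bisect import bisect_right

ACC_HZ = 1000      # accelerometer rate stated in the dataset description
PRESSURE_HZ = 40   # pressure rate stated in the dataset description

def timestamps(n_samples, rate_hz, t0=0.0):
    """Uniform sample times in seconds (assumption: no dropped samples)."""
    return [t0 + i / rate_hz for i in range(n_samples)]

def interp_to(target_t, src_t, src_v):
    """Linearly interpolate src_v (sampled at src_t) onto target_t.

    Times outside the source range are clamped to the first/last sample.
    """
    out = []
    for t in target_t:
        if t <= src_t[0]:
            out.append(src_v[0])
        elif t >= src_t[-1]:
            out.append(src_v[-1])
        else:
            j = bisect_right(src_t, t)  # src_t[j-1] <= t < src_t[j]
            t0, t1 = src_t[j - 1], src_t[j]
            v0, v1 = src_v[j - 1], src_v[j]
            out.append(v0 + (v1 - v0) * (t - t0) / (t1 - t0))
    return out
```

For one second of data, `interp_to(timestamps(1000, ACC_HZ), timestamps(40, PRESSURE_HZ), pressure)` yields 1000 pressure values aligned with the accelerometer samples. Linear interpolation is chosen here only for simplicity; a low-pass or polyphase resampler may be preferable for spectral analysis.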

- Categories:

178 Views

This upload presents raw data and Python source code for the signal processing used in overflow velocity measurement with a millimeter-wave MIMO-FMCW radar on a fabricated, real-scale pseudo embankment. The dataset and code offer insights into developing robust river embankments, which are crucial for mitigating embankment failures during heavy rains in Japan. The methodology involves constructing a pseudo embankment, as recommended by the Ministry of Land, Infrastructure, Transport, and Tourism (MLIT), for technology validation.
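The description does not state how velocity is extracted from the radar returns. As a generic illustration only, a common FMCW approach estimates radial velocity from the phase change of one range bin between consecutive chirps, v = λΔφ / (4πT_c). The carrier frequency and chirp period used below are hypothetical placeholders, not the parameters of this measurement.

```python
import math

C = 299_792_458.0  # speed of light in m/s

def velocity_from_phase(delta_phi_rad, carrier_hz, chirp_period_s):
    """Radial velocity from the inter-chirp phase change of one range bin.

    v = lambda * dphi / (4 * pi * Tc); unambiguous only for |dphi| < pi.
    The sign convention (approaching vs. receding) depends on how the
    radar defines phase, so it is an assumption here.
    """
    wavelength = C / carrier_hz
    return wavelength * delta_phi_rad / (4 * math.pi * chirp_period_s)

def max_unambiguous_velocity(carrier_hz, chirp_period_s):
    """|v_max| = lambda / (4 * Tc), reached when dphi = pi."""
    return C / carrier_hz / (4 * chirp_period_s)

# Hypothetical settings, not taken from the dataset: 77 GHz carrier, 100 us chirps.
vmax = max_unambiguous_velocity(77e9, 100e-6)
```

With these illustrative settings the unambiguous velocity limit is roughly 9.7 m/s; faster surface flow would alias unless the chirp period is shortened.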

- Categories:

271 Views

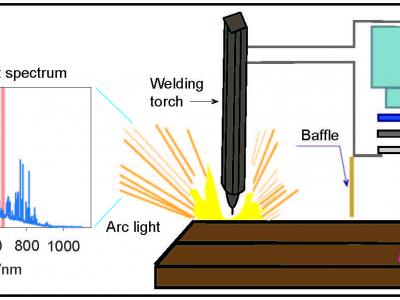

Laser-vision systems (LVS), which provide effective sensing of weld seams, are widely used in robotic welding. Because of the severe visual noise generated during welding, accurate real-time detection of weld seams is difficult. Since the optical propagation of the laser is affected by the arc light, the filtering methods of traditional passive vision systems (PVS) for detecting weld seams are not applicable. This paper investigates the selection of an optimal imaging band for the LVS to eliminate arc noise.

- Categories:

206 Views

This dataset abstract presents findings from a study focused on enhancing surface crack detection in railroad safety through the development and optimization of a hybrid Eddy Current Testing (ECT) probe. Traditional ECT methods often encounter challenges related to 'lift-off noise', which arises from variations in probe-to-material distances. To mitigate this issue, the study introduces a novel probe design that integrates transmit and differential receiver coils, aimed at improving detection sensitivity and minimizing lift-off impact.

- Categories:

190 Views

This dataset contains results of the 60 GHz indoor sensing measurement campaign using a bistatic OFDM radar based on 5G-specified positioning reference signals (PRSs). The data can be used for testing end-to-end indoor millimeter-wave radio positioning as well as simultaneous localization and mapping (SLAM) algorithms, including channel parameter estimation. Beamformed PRS with dense angular sampling in transmission and reception allows efficient capture of line-of-sight (LoS) as well as multipath components.
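Because the beamformed PRS are swept at both ends of the link, each multipath component comes with a propagation delay and an angle of arrival. As a generic illustration, not the authors' processing chain, a single-bounce scatterer can be placed in 2D from those two quantities plus the known transmitter and receiver positions. The function name, the 2D simplification, and the angle convention (measured from the +x axis at the receiver) are assumptions.

```python
import math

C = 299_792_458.0  # speed of light in m/s

def locate_scatterer(tx, rx, aoa_rad, delay_s):
    """Single-bounce bistatic localization in 2D (hypothetical helper).

    tx, rx  : (x, y) transmitter and receiver positions in metres
    aoa_rad : angle of arrival at the receiver, measured from the +x axis
    delay_s : delay of the path Tx -> scatterer -> Rx

    The scatterer lies at p = rx + r*u (u = unit AoA vector) and satisfies
    |p - tx| + r = L, with L = C * delay_s the bistatic path length.
    Solving gives r = (L^2 - |d|^2) / (2 * (L + d.u)), where d = rx - tx.
    """
    L = C * delay_s
    ux, uy = math.cos(aoa_rad), math.sin(aoa_rad)
    dx, dy = rx[0] - tx[0], rx[1] - tx[1]
    if L <= math.hypot(dx, dy):
        # A path no longer than the direct Tx-Rx distance has no reflector.
        raise ValueError("delay is not consistent with a single-bounce path")
    r = (L * L - (dx * dx + dy * dy)) / (2.0 * (L + dx * ux + dy * uy))
    return (rx[0] + r * ux, rx[1] + r * uy)
```

For example, with the transmitter at (0, 0), the receiver at (4, 0), and a reflector at (2, 3), feeding the corresponding delay and angle back into `locate_scatterer` recovers (2, 3). Real SLAM pipelines would also handle angle-of-departure information, 3D geometry, and estimation noise.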

- Categories:

1726 Views

The popularity of smartphones has also popularized reading content on them. Reading on a smartphone differs considerably from reading on a desktop system, where the mouse and keyboard are the peripherals associated with reading. Studies of how these devices are handled have made it possible to derive implicit feedback about the content being read. A comparable study of implicit feedback on smartphones remains a significant gap. Reading on smartphones involves screen gestures such as pinch-to-zoom, tap, scroll, orientation change, and screen capture.

- Categories:

148 Views

The mechanical properties of fiber Bragg grating (FBG) strain sensors in ultra-low-temperature environments are critical for structural health monitoring (SHM) in the aerospace field. In this paper, two kinds of FBG sensors, temperature sensors and strain sensors, were fabricated using femtosecond laser technology and an ultrasonic metallization packaging process. The strain transfer mechanism, microstructural behavior, and macroscopic mechanical properties of the FBG strain sensors were discussed in detail, and the corresponding theoretical models were constructed.

- Categories:

63 Views

SeaIceWeather Dataset

This is the SeaIceWeather dataset, collected for training and evaluating deep-learning-based de-weathering models. To the best of our knowledge, it is the first such publicly available dataset for the sea ice domain. The dataset accompanies our paper titled "Deep Learning Strategies for Analysis of Weather-Degraded Optical Sea Ice Images", which can be accessed at: https://doi.org/10.1109/jsen.2024.3376518

- Categories:

326 Views

Various modes of transportation traverse our roadways, which makes object classification important for improving traffic safety. Optical sensors that rely on visual data struggle in adverse weather conditions, where poor visibility hinders target classification. In this project, we use an off-the-shelf millimeter-wave frequency-modulated continuous-wave (FMCW) radar, the Texas Instruments IWR1843BOOST module, to classify on-road objects. By combining the radar module, the Robot Operating System (ROS), and Python scripts, we extracted a dataset of 3D point cloud images.
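Two basic FMCW relations help when interpreting the radar point clouds: target range follows from the beat frequency and the chirp slope, R = c·f_b / (2S), and range resolution is set by the swept bandwidth, ΔR = c / (2B). The sketch below uses illustrative chirp settings (a 4 GHz sweep over 40 µs), which are assumptions rather than the configuration used to record this dataset.

```python
C = 299_792_458.0  # speed of light in m/s

def range_from_beat(beat_hz, slope_hz_per_s):
    """Target range R = c * f_b / (2 * S) for a linear FMCW chirp."""
    return C * beat_hz / (2 * slope_hz_per_s)

def range_resolution(bandwidth_hz):
    """Smallest separable range difference: c / (2 * B)."""
    return C / (2 * bandwidth_hz)

# Hypothetical chirp settings, not taken from the dataset.
BANDWIDTH_HZ = 4e9      # swept bandwidth
RAMP_TIME_S = 40e-6     # chirp ramp duration
SLOPE = BANDWIDTH_HZ / RAMP_TIME_S  # Hz per second
```

Under these assumptions the range resolution is about 3.7 cm, and a 1 MHz beat tone corresponds to a target roughly 1.5 m away.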

- Categories:

76 Views

The uploaded project contains the code and dataset for Charging Efficiency Optimization Based on Swarm Reinforcement Learning under Dynamic Energy Consumption for WRSN. The documents in the project are as follows:

- data: contains the network data and simulation data.
- iostream: contains the program for reading and writing data.
- main: contains the main program that executes the simulation.
- network: The network file

- Categories:

82 Views
