Sensors

As semiconductor devices have become increasingly miniaturized, controlling very small critical dimensions (CDs) during etching through well-controlled plasma processes has become crucial. Consequently, diagnosing the plasma and feeding the results back into the process to improve yield is of paramount importance. Typically, an invasive sensor such as a single Langmuir probe (SLP) is used for plasma diagnostics. However, such a probe can perturb the plasma, which motivates the use of non-invasive diagnostic methods.

74 Views

The accurate distinction between line-of-sight (LOS) and non-line-of-sight (NLOS) propagation channels is paramount for precise distance measurement within ultra-wideband (UWB) indoor localization systems. In complex and dynamic environments, such as those encountered in the indoor positioning of autonomous mobile robots or vehicles, UWB signal propagation is particularly susceptible to NLOS conditions.

567 Views

RLED contains 80,400 images and corresponding events. We used a photometer to continuously measure scene illumination and to calculate the illumination value after attenuation at the event camera. The captured scenes include city (35.0%), suburbs (10.3%), town (14.5%), village (17.8%), and valley (22.4%). Half of the RLED frames were captured at 25 fps and the other half at 10 fps. The exposure time is set to 1 ms, 3 ms, or 5 ms depending on the environmental illumination.

194 Views

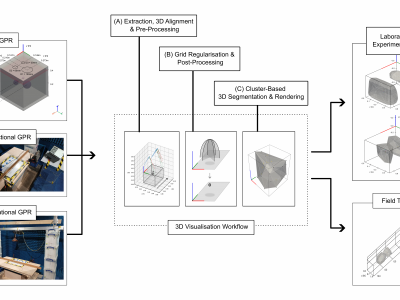

Ground Penetrating Radar (GPR) facilitates the detection and localisation of subsurface structural anomalies in critical transport infrastructure (e.g. tunnels), better informing targeted maintenance strategies. However, conventional fixed-directional systems suffer from limited coverage - especially of less-accessible structural aspects (e.g. crowns) - alongside unclear visual output of anomaly spatial profiles, both for physical and simulated datasets.

536 Views

The uploaded data are experimental fringe images captured with an experimental platform that we built. The phase-shifting fringe images are divided into those required for calibration and those used to measure the target. The calibration images are fringe images taken at four different heights: 15 mm, 25 mm, 30 mm, and 40 mm.

327 Views

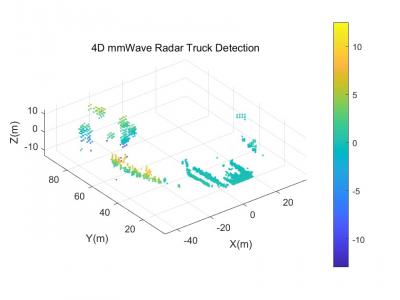

We present a comprehensive multi-sensor dataset comprising 4D mmWave radar point clouds, lidar point clouds, and camera images collected in an open-pit mine. The dataset covers various operational scenarios, including the dumping site, loading site, connecting roads, and haulage maintenance area, under lighting conditions such as cloudy, dark, daylight, and overcast skies.

396 Views

These are the 16-step phase-shift fringe images and five Gray code fringe images that we collected and used in the experiment. To facilitate the comparison experiments, we also provide the average intensity images.

To make the comparison more representative, we collected images with no target, with a horizontally discontinuous target, and with a continuous target, so that the results cover a wider range of situations.
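For readers unfamiliar with this kind of data, the following is a minimal single-pixel sketch of how N-step phase-shift images and Gray code images are commonly combined into an absolute phase. It is not the authors' processing code. The fringe model `I_n = A + B*cos(phi + 2*pi*n/N)`, the MSB-first Gray code ordering, the use of the average intensity as the binarization threshold, and all function names are assumptions made for illustration.

```python
import math


def wrapped_phase(intensities):
    """Wrapped phase in [0, 2*pi) for one pixel from N equally spaced steps.

    Assumed fringe model: I_n = A + B*cos(phi + 2*pi*n/N), n = 0..N-1.
    """
    n_steps = len(intensities)
    if n_steps < 3:
        raise ValueError("need at least 3 phase steps")
    s = sum(v * math.sin(2 * math.pi * n / n_steps)
            for n, v in enumerate(intensities))
    c = sum(v * math.cos(2 * math.pi * n / n_steps)
            for n, v in enumerate(intensities))
    # Under the assumed model, s = -(N*B/2)*sin(phi) and c = (N*B/2)*cos(phi).
    return math.atan2(-s, c) % (2 * math.pi)


def average_intensity(intensities):
    """Mean over the phase steps; equals the background term A in the model."""
    return sum(intensities) / len(intensities)


def gray_to_order(bits):
    """Decode Gray code bits (MSB first, an assumed ordering) to a fringe order."""
    order = 0
    prev = 0
    for g in bits:
        prev ^= g                      # binary bit = previous binary bit XOR Gray bit
        order = (order << 1) | prev
    return order


def absolute_phase(intensities, gray_values):
    """Absolute phase = wrapped phase + 2*pi*(fringe order).

    Assumption: Gray code pixels are binarized against the average intensity.
    """
    threshold = average_intensity(intensities)
    bits = [1 if v > threshold else 0 for v in gray_values]
    return wrapped_phase(intensities) + 2 * math.pi * gray_to_order(bits)


# Synthetic pixel: 16 steps, true phase 1.0 rad, Gray code 00111 (fringe order 5).
N = 16
pixel = [120 + 80 * math.cos(1.0 + 2 * math.pi * n / N) for n in range(N)]
gray_pixel = [10, 10, 200, 200, 200]
phi_abs = absolute_phase(pixel, gray_pixel)
```

With five Gray code images, this scheme can label up to 32 fringe periods. In practice the same arithmetic would be applied per pixel across whole images, and misalignment between Gray code edges and phase jumps usually needs extra handling that this sketch omits.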

242 Views

Ultra-wideband (UWB) radar can perceive its surroundings regardless of visibility owing to its broad frequency spectrum. UWB technology can therefore be employed on mobile robots to perform simultaneous localization and mapping (SLAM) in vision-denied environments (e.g. smoke, fog, walls with reflective surfaces). We teleoperated a TurtleBot2 nonholonomic robot equipped with Novelda X4M300 monostatic radar modules and RPLIDAR-A2 laser range scanner(s) in four different environments.

139 Views

Supplementary video for the article "A Programmable CMOS DEP Chip for Cell Manipulation"

This video demonstrates the use of a CMOS DEP chip for particle manipulation, including particle patterning, concentration control, and single particle manipulation, all performed on the same chip and sample.

430 Views

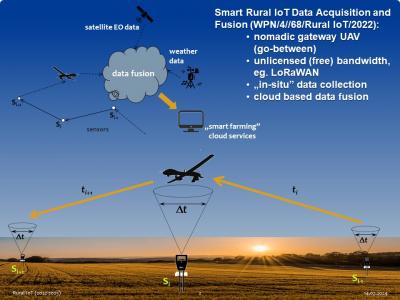

A measurement campaign was conducted across the entire 2023 growing season. Seven Arduino+LoRaWAN sensors measured soil moisture, temperature, and pH, as well as solar irradiance, within a radius of about 35 km around Gdansk, Poland. Raw data were collected by a nomadic gateway aboard a UAV and transferred to the cloud for analysis (package part A). Based on a physics-informed analysis of the underlying measurement processes, anomalies were classified and parametrized.

706 Views
