Transportation

Abstract: In this paper, an FSS-based absorber with high absorption efficiency is proposed in the terahertz regime for use in communications applications. It has the potential to enhance the performance of communication systems, minimize signal interference, and guarantee the stability and efficiency of data transmission. The structure of this terahertz absorber comprises annular patches and shaped patches.

261 Views

Modern automotive embedded systems include a large number of electronic control units (ECUs) that manage sophisticated functions such as engine control, anti-lock braking (ABS), traction control, and power steering. To ensure the reliability and effectiveness of these functions, it is essential to apply rigorous test approaches and standards. The integration of diagnostic functions in automotive embedded systems demands consistent tests and a detailed analysis of data.

173 Views

This dataset provides Reference Signal Received Power (RSRP) measurements collected by User Equipment (UE) devices positioned inside a moving train whose windows carry a hexagonal frequency-selective pattern. It also includes RSRP values obtained from an external reference source using the rooftop train antenna.

All the data in this dataset corresponds to the research conducted in our work titled "Enhancing Mobile Communication on Railways: Impact of Train Window Size and Coating".

336 Views

This is the Ecuadorian Traffic Officer Detection Dataset, intended mainly for traffic officer detection projects using YOLO. The dataset is in YOLO format and contains 1862 images, fully annotated with the Roboflow labeling tool. It is split as follows: 1734 images for training, 81 for validation, and 47 for testing. All annotations use a single class, Traffic Officer (EMOV). The dataset produced a mean Average Precision (mAP) of 96.4% using YOLOv3m, 99.0% using YOLOv5x, and 98.10% using YOLOv8x.
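For readers unfamiliar with the YOLO annotation format mentioned above, the following is a minimal sketch of how one label line can be converted to pixel coordinates. It assumes the common YOLO text layout (`class x_center y_center width height`, all normalized to the image size). The label line, image size, and the names `PixelBox` and `yolo_line_to_pixel_box` are hypothetical and not taken from the dataset. The split counts are the ones stated in the description.

```python
from typing import NamedTuple


class PixelBox(NamedTuple):
    class_id: int
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def yolo_line_to_pixel_box(line: str, img_w: int, img_h: int) -> PixelBox:
    """Convert one YOLO label line to pixel corner coordinates."""
    parts = line.split()
    if len(parts) != 5:
        raise ValueError(f"expected 5 fields, got {len(parts)}: {line!r}")
    class_id = int(parts[0])
    # Centre and size are fractions of the image width and height.
    xc, yc, w, h = (float(p) for p in parts[1:])
    return PixelBox(
        class_id,
        (xc - w / 2) * img_w,
        (yc - h / 2) * img_h,
        (xc + w / 2) * img_w,
        (yc + h / 2) * img_h,
    )


# Hypothetical label line and image size, for illustration only.
box = yolo_line_to_pixel_box("0 0.5 0.5 0.25 0.5", img_w=640, img_h=480)

# Split sizes as stated in the dataset description.
splits = {"train": 1734, "val": 81, "test": 47}
total_images = sum(splits.values())
split_fractions = {name: count / total_images for name, count in splits.items()}
```

With these counts, roughly 93% of the images fall in the training split, which is worth keeping in mind when comparing the reported mAP values across models.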

383 Views

Existing road image datasets predominantly focus on European roads, which show less variability in traffic and road conditions. To address this limitation, we have developed an image dataset tailored to Indian road conditions, capturing the extensive variations in traffic and environment.

413 Views

This archive includes three popular traffic datasets: Abilene, GEANT, and TaxiBJ.

Abilene and GEANT are network traffic datasets, and TaxiBJ is an urban traffic dataset.

1557 Views

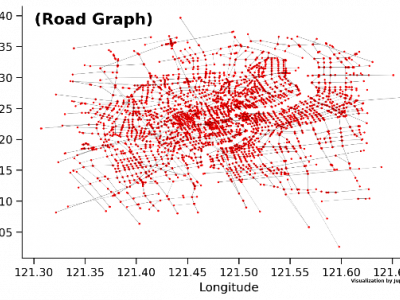

The SH_traffic dataset presents comprehensive traffic speed data for the Shanghai urban area, collected over a period from January 25, 2022, to February 9, 2022. The dataset encompasses a square area of 900 km² and consists of 4,500 graph nodes representing road intersections across the city. The dataset includes traffic speed information recorded at regular intervals, along with related data such as geographical entity attributes and relationships between entities within the road network.
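The figures quoted above imply a few derived quantities that help gauge the scale of the graph. The short sketch below works them out from the stated numbers only. The variable names are illustrative, and treating the collection period as inclusive of both end dates is an assumption.

```python
import math
from datetime import date

AREA_KM2 = 900    # square coverage area, as stated
NUM_NODES = 4500  # road-intersection nodes, as stated

# Side length of the square region and average node density.
side_km = math.sqrt(AREA_KM2)
nodes_per_km2 = NUM_NODES / AREA_KM2

# Length of the collection period, counting both end dates (assumption).
start, end = date(2022, 1, 25), date(2022, 2, 9)
num_days = (end - start).days + 1

print(f"{side_km:.0f} km side, {nodes_per_km2:.1f} nodes/km², {num_days} days")
```

The sampling interval is not stated in the description, so the number of time steps per node cannot be derived from these figures alone.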

135 Views

DIRS24.v1 is a dataset of images captured in a campus environment. The images are curated for developing perception modules, which can be employed in Advanced Driver Assistance Systems (ADAS). The images are annotated in several formats: COCO-MMDetection, Pascal-VOC, TensorFlow, YOLOv7-PyTorch, YOLOv8-Oriented Bounding Box, and YOLOv9.

602 Views

Achieving robust path tracking is essential for efficiently operating autonomous driving systems, particularly in unpredictable environments. This paper introduces a novel path-tracking control methodology utilizing a variable second-order Sliding Mode Control (SMC) approach. The proposed control strategy addresses the challenges posed by uncertainties and disturbances by reconfiguring and expanding the state-space matrix of a kinematic bicycle model, guaranteeing Lyapunov stability and convergence of the system.
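As background for the kinematic bicycle model named above, here is a minimal sketch of the standard rear-axle form of that model, integrated with a forward Euler step. It does not reproduce the paper's reconfigured state-space matrix or its sliding mode control law, neither of which is given in the abstract. The names `State` and `bicycle_step` and all numeric values are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class State:
    x: float    # rear-axle position, m
    y: float    # rear-axle position, m
    yaw: float  # heading angle, rad


def bicycle_step(state: State, v: float, steer: float,
                 wheelbase: float, dt: float) -> State:
    """Advance the kinematic bicycle model by one forward Euler step.

    x'   = v * cos(yaw)
    y'   = v * sin(yaw)
    yaw' = (v / wheelbase) * tan(steer)
    """
    return State(
        x=state.x + v * math.cos(state.yaw) * dt,
        y=state.y + v * math.sin(state.yaw) * dt,
        yaw=state.yaw + (v / wheelbase) * math.tan(steer) * dt,
    )


# Illustrative values: 10 m/s for 1 s with zero steering.
straight = State(0.0, 0.0, 0.0)
for _ in range(10):
    straight = bicycle_step(straight, v=10.0, steer=0.0, wheelbase=2.7, dt=0.1)

# Same speed with a small constant left steer: the heading increases.
turning = State(0.0, 0.0, 0.0)
for _ in range(10):
    turning = bicycle_step(turning, v=10.0, steer=0.05, wheelbase=2.7, dt=0.1)
```

A path-tracking controller would choose `steer` at each step from the tracking error. Here the input is held constant only to show the model's open-loop behaviour.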

33 Views