Computer Vision

Fabdepth HMI is designed for hand gesture detection in Human Machine Interaction. It contains a total of 8 gestures performed by 150 different individuals, ranging from toddlers to senior citizens, which adds diversity to the dataset. The gestures are available in 3 formats: resized, foreground-background separated, and depth-estimated images. A video format of 150 samples is also included. Researchers may choose the combination of data modalities that suits their application.

- Categories:

279 Views

Solar energy production has grown significantly in recent years in the European Union (EU), accounting for 12% of the total in 2022. The growth can be attributed to the increasing adoption of solar photovoltaic (PV) panels, which have become a cost-effective and efficient means of energy production, supported by government policies and incentives. The maturity of solar technologies has also lowered the cost of solar energy, making it more competitive with other energy sources.

- Categories:

969 Views

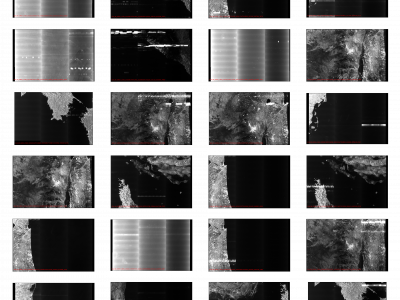

Synthetic Aperture Radar (SAR) satellite images are increasingly used for Earth observation. While SAR images are usable in most conditions, they occasionally suffer image degradation due to interfering signals from external radars, called Radio Frequency Interference (RFI). RFI-affected images are often discarded from further analysis or pre-processed to remove the RFI.

- Categories:

330 Views

As is common for change detection datasets, the images are divided into three parts: the image before the change, the image after the change, and the label image showing the changed area. This dataset is characterized by significant seasonal differences between bi-temporal image pairs, which makes up for some of the deficiencies in existing datasets. The labels include some irregular changes, such as the appearance and disappearance of cars, but do not include seasonal changes, such as changes in the ground surface caused by snowfall.
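The three-part layout described above can be sketched as a small data structure. This is only an illustration: the class name `ChangeSample`, the nested-list image representation, and the 0/1 label encoding are assumptions, not the dataset's actual file format.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical image type: a grid of pixel values. Real data would be image files.
Image = List[List[int]]


@dataclass
class ChangeSample:
    """One bi-temporal sample: before image, after image, and change label."""
    before: Image
    after: Image
    label: Image  # assumed encoding: 1 = changed pixel, 0 = unchanged pixel

    def changed_fraction(self) -> float:
        """Return the fraction of pixels marked as changed in the label."""
        total = sum(len(row) for row in self.label)
        changed = sum(sum(row) for row in self.label)
        return changed / total


# Toy 2x2 example. The bottom row differs between the two dates (for instance,
# snow cover) but is left unlabeled, mirroring how seasonal changes are excluded.
sample = ChangeSample(
    before=[[10, 10], [10, 10]],
    after=[[10, 200], [90, 90]],
    label=[[0, 1], [0, 0]],
)
print(sample.changed_fraction())
```

In the toy sample only one of the four pixels is labeled as changed, even though three pixel values differ, which is the distinction between irregular and seasonal change that the labels draw.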

- Categories:

1024 Views

Handwriting is one of the most consequential inventions in human evolution. Through writing, we convey our thoughts, make business agreements, make the world understandable, and simplify tasks that were once difficult. Determining gender from offline handwriting is an applied research problem in forensics, psychology, and security, and the need is growing as technology evolves. The general problem of gender detection from handwriting poses many difficulties that stem from interpersonal and intrapersonal differences.

- Categories:

1188 Views

MODI script is one of the oldest written forms of media. Most of the early written knowledge on subjects like medicine, Buddhist ideology, food habits, and horoscopes was written in MODI script. MODI script was used from the Yadava era to the era of Chhatrapati Shivaji Maharaj for writing administrative and government documents, and it was used extensively during the Peshwa era. Uses of MODI script are found even during British rule. Its use began to decline from 1960 onwards.

- Categories:

1149 Views

The CAD-EdgeTune dataset was acquired using a Husarion ROSbot 2.0 and ROSbot 2.0 Pro in a suburban university environment, with the collection speed set to 5 frames per second. The data can be split into noon, dusk, and dawn subgroups to depict the surroundings under various lighting conditions. We have assembled 17 sequences totaling 8080 frames, of which 1619 have been manually annotated using an open-source pixel annotation program. Since neighboring frames are highly similar to one another, we annotate every fifth image.
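As a rough illustration of the subsampling described above, the sketch below picks every fifth frame index from a sequence. The function name `frames_to_annotate` and the choice to start at index 0 are assumptions; the dataset description does not say which frame in each group of five is kept.

```python
def frames_to_annotate(num_frames, step=5):
    """Return the indices of frames selected for annotation.

    Assumes selection starts at frame 0 and keeps every `step`-th frame.
    """
    return list(range(0, num_frames, step))


# At 5 frames per second, keeping every fifth frame yields roughly
# one annotated frame per second of recording.
print(frames_to_annotate(12))

# Applied to all 8080 frames as one sequence, this gives 1616 indices.
print(len(frames_to_annotate(8080)))
```

Under this assumption, each of the 17 sequences can contribute one extra frame when its length is not a multiple of five, so the total would fall between 1616 and 1633, a range that includes the reported 1619 annotated frames.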

- Categories:

178 Views

The 42 stimulus pictures, which include landscapes, people, social scenes, and composite pictures, are presented one at a time on the screen in the same sequence for all participants. The eye tracker records each participant's gaze data on the stimulus pictures. Based on the gaze fixation position and duration, the fixation map can be visualized. We apply a 2-D convolution with a Gaussian filter to the fixation maps to obtain the visual heatmaps. The participants consist of schizophrenic patients and healthy controls.
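The heatmap step can be sketched in plain Python as follows. This is a minimal illustration, not the authors' pipeline: the `(x, y, duration)` fixation format, weighting each fixation by its duration, the value of `sigma`, the kernel radius of three sigma, and zero padding at the borders are all assumptions.

```python
import math


def gaussian_kernel(sigma, radius):
    """Return a normalized 1-D Gaussian kernel of length 2 * radius + 1."""
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def convolve_rows(grid, kernel):
    """Convolve every row with the kernel, treating cells outside the grid as zero."""
    radius = len(kernel) // 2
    width = len(grid[0])
    out = []
    for row in grid:
        new_row = []
        for x in range(width):
            acc = 0.0
            for k, w in enumerate(kernel):
                xx = x + k - radius
                if 0 <= xx < width:
                    acc += w * row[xx]
            new_row.append(acc)
        out.append(new_row)
    return out


def transpose(grid):
    """Swap rows and columns of a rectangular grid."""
    return [list(col) for col in zip(*grid)]


def fixation_heatmap(fixations, width, height, sigma=2.0):
    """Build a heatmap from (x, y, duration) fixations (assumed format)."""
    # Fixation map: accumulate fixation duration at each gaze position.
    fixation_map = [[0.0] * width for _ in range(height)]
    for x, y, duration in fixations:
        if 0 <= x < width and 0 <= y < height:
            fixation_map[y][x] += duration
    kernel = gaussian_kernel(sigma, radius=int(3 * sigma))
    # A 2-D Gaussian is separable, so blur along rows and then along columns.
    blurred = convolve_rows(fixation_map, kernel)
    return transpose(convolve_rows(transpose(blurred), kernel))


# Hypothetical fixations on a 40 x 30 pixel stimulus.
fixations = [(10, 8, 0.30), (11, 8, 0.20), (25, 20, 0.50)]
heatmap = fixation_heatmap(fixations, width=40, height=30)
```

Because the kernel is normalized, the heatmap keeps the total fixation duration as long as the fixations lie far enough from the image border. In practice a library routine such as a Gaussian filter from an image-processing package would likely replace the hand-written loops.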

- Categories:

25 Views

This dataset provides high-resolution remote sensing data covering various coastline scenes.

- Categories:

316 Views
