Security

With their wide adoption, Linux-based IoT devices have emerged as a primary target of today’s cyber attacks. While traditional malware-based attacks (e.g., Mirai) can spread quickly across these devices, they are well-understood threats with established defense techniques such as malware fingerprinting coupled with community-based fingerprint sharing. Recently, fileless attacks (attacks that do not rely on malware files) have become increasingly common on Linux-based IoT devices.

119 Views

This dataset was created to develop and test firmware attestation techniques for embedded IoT swarms using Static Random Access Memory (SRAM). It contains sequential, synchronous SRAM traces collected from four-node and six-node IoT swarms, in which each device has 2 KB of SRAM. Each device is loaded with "normal" or "tampered" firmware to create different network scenarios. Swarm-1 is a four-node network encompassing thirteen scenarios, including two normal network states, two physical twin states, and nine anomalous states.

563 Views

This 5G dataset, which can be used to advance research on attack detection in the 5G service-based architecture, was generated using the open-source Free5GC testbed and UERANSIM, a UE/RAN simulator. The dataset includes benign traffic featuring 5G procedures (e.g., registration, deregistration, PDU session establishment/release, uplink, downlink) along with six different HTTP/2 attacks simulated between different 5G network functions.

800 Views

This dataset comprises qualitative and quantitative data collected from a comprehensive study evaluating the prevalence and types of social engineering vulnerabilities within Tanzanian higher learning institutions. The data was gathered through surveys and structured interviews with 395 participants, including students, academic staff, and administrative staff.

618 Views

This upload contains parts of the datasets used in the paper "ADFLOW: Integrated and Comprehensive Ad Detection Considering Relationships Among Webpage Elements", including SITE-D, IMG-D, TEXT-D, and some PageGraphs extracted from websites in SITE-D.

The IMG-D dataset is large and we have not yet finished organizing it, so only a portion of it is included here. Similarly, because the full PageGraph dataset used in our experiments is very large, we have uploaded the PageGraphs of only a few hundred websites.

148 Views

Anomaly detection in Phasor Measurement Unit (PMU) data requires high-quality, realistic labeled datasets for algorithm training and validation. Obtaining labeled field data is challenging because of privacy and security concerns and the rarity of certain anomalies, which makes a robust testbed indispensable. This paper presents the development and implementation of a Hardware-in-the-Loop (HIL) Synchrophasor Testbed designed to generate realistic data for testing and validating PMU anomaly detection algorithms.

1489 Views

This dataset was generated to develop and test attestation techniques for IoT devices. It consists of RAM traces for eight different firmware images, including traces from running the legitimate firmware as well as tampered versions of it. We upload the firmware onto the IoT device and allow it to operate for a predefined period of 300 seconds. Throughout the device's normal operation, we use the gateway node to collect numerous RAM trace samples, each comprising 2048 bytes, with randomized intervals between consecutive samples.

173 Views

This dataset serves as the replication package for the article "Migrating Software Systems towards Post-Quantum Cryptography - A Systematic Literature Review".

In the article, we conducted a systematic literature review whose search and selection procedure comprises several phases.

This replication package describes these phases in detail so that our results can be reproduced.

105 Views

This dataset comprises over 38,000 seed inputs generated by a range of Large Language Models (LLMs), including ChatGPT-3.5, ChatGPT-4, Claude-Opus, Claude-Instant, and Gemini Pro 1.0, designed specifically for fuzzing Python functions. These seeds were produced as part of a study evaluating the utility of LLMs in automating the creation of effective fuzzing inputs, a task that is crucial for uncovering software defects in the Python programming environment, where traditional methods show limitations.
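To illustrate how seed inputs are typically consumed, the following is a minimal sketch of seed-based mutation fuzzing. It is not the study's harness: the `mutate` and `fuzz` helpers, the `parse_ratio` target, and the three example seeds are all hypothetical, and treating `ValueError` as an expected rejection is an assumption made only for this example.

```python
import random


def mutate(seed: str, rng: random.Random) -> str:
    """Apply one random character-level mutation (flip, delete, or insert)."""
    if not seed:
        return chr(rng.randrange(32, 127))
    pos = rng.randrange(len(seed))
    op = rng.choice(("flip", "delete", "insert"))
    new_char = chr(rng.randrange(32, 127))  # printable ASCII
    if op == "flip":
        return seed[:pos] + new_char + seed[pos + 1:]
    if op == "delete":
        return seed[:pos] + seed[pos + 1:]
    return seed[:pos] + new_char + seed[pos:]


def fuzz(target, seeds, rounds=200, rng_seed=0):
    """Call target on mutated seeds and record inputs that raise unexpectedly."""
    rng = random.Random(rng_seed)
    failures = []
    for _ in range(rounds):
        candidate = mutate(rng.choice(seeds), rng)
        try:
            target(candidate)
        except ValueError:
            pass  # assumption: ValueError is the target's normal rejection path
        except Exception as exc:
            failures.append((candidate, type(exc).__name__))
    return failures


def parse_ratio(text: str) -> float:
    """Hypothetical function under test: parses 'a/b' and returns a / b."""
    numerator, denominator = text.split("/")
    return int(numerator) / int(denominator)


# Hypothetical seeds standing in for LLM-generated ones.
seeds = ["1/2", "10/5", "7/0"]
failures = fuzz(parse_ratio, seeds)
for candidate, error_name in failures:
    print(f"{candidate!r} raised {error_name}")
```

Good seeds matter because mutations stay close to the seed: a seed that already has the right shape (here, `a/b`) lets small mutations reach deeper code paths than random strings would.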

227 Views

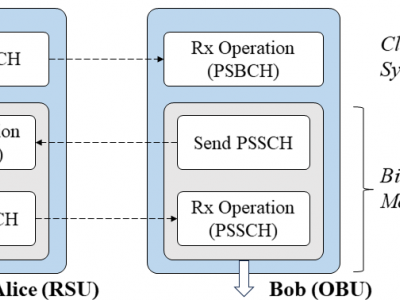

To test the reciprocity of the V2X channel, bidirectional channel state information (CSI) measurements were conducted between Alice (RSU) and Bob (OBU) using the PSSCH signal. We used two USRP X310 SDR platforms equipped with the CBX daughter board as Alice and Bob. Despite the fast USRP transceiver switching we designed, there is a signal transmission delay of approximately 0.3 ms, resulting in a gap of about 4 to 5 symbols in the PSSCH subframe actually received by Alice and Bob.
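The relationship between the 0.3 ms delay and the 4 to 5 symbol gap can be checked with a short calculation. The sketch below assumes an LTE-style sidelink subframe of 1 ms containing 14 symbols (normal cyclic prefix); the description does not state the numerology, so both constants are assumptions.

```python
import math

SUBFRAME_DURATION_S = 1e-3   # assumption: 1 ms subframe
SYMBOLS_PER_SUBFRAME = 14    # assumption: normal cyclic prefix
SWITCH_DELAY_S = 0.3e-3      # approximate delay given in the description

# Average symbol duration, ignoring the slightly longer first symbol of each slot.
symbol_duration_s = SUBFRAME_DURATION_S / SYMBOLS_PER_SUBFRAME
gap_symbols = SWITCH_DELAY_S / symbol_duration_s

print(f"average symbol duration: {symbol_duration_s * 1e6:.1f} us")
print(f"delay expressed in symbols: {gap_symbols:.2f}")
print(f"whole symbols affected: {math.floor(gap_symbols)} to {math.ceil(gap_symbols)}")
```

Under these assumptions the delay spans about 4.2 symbol durations, which is consistent with the reported gap of 4 to 5 symbols depending on where the switch falls relative to symbol boundaries.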

516 Views
