Image Processing

This dataset is collected from Kaggle (https://www.kaggle.com/datasets/nazmul0087/ct-kidney-dataset-normal-cyst-tumor-and-stone ). The dataset was collected from PACS (Picture archiving and communication system) from different hospitals in Dhaka, Bangladesh where patients were already diagnosed with having a kidney tumor, cyst, normal or stone findings.

- Categories:

259 Views

259 Views

This dataset (samplePointsCities_20240811_harmonized.csv) was used for the Rescaled Water Index (RWI) proposal. The GitHub page (https://github.com/edujusti/Rescaled-Water-Index-RWI) contains the Python and JavaScript (Google Earth Engine) scripts used for data production, statistical analyses, and result visualization of the RWI spectral index.

This spectral index is a modification of MNDWI to enhance the mappings of water surfaces.

- Categories:

136 Views

136 Views

This dataset contains still thermal frames from thirty patients undergoing awake craniotomy for brain tumor resection. The data were used as part of a study on automated craniotomy masking, where the portion of the craniotomy image which contans the brain is identified. The data contains manually generated gold-standard masks, as well as masks created with the proposed method in "Automated Craniotomy Masking for Intraoperative Thermography".

- Categories:

82 Views

82 ViewsThe ultrasound video data were collected from two sets of neck ultrasound videos of ten healthy subjects at the Ultrasound Department of Longhua Hospital Affiliated to Shanghai University of Traditional Chinese Medicine. Each subject included video files of two groups of LSCM, LSSCap, RSCM, and RSSCap. The video format is avi.

The MRI training data were sourced from three hospitals: Longhua Hospital, Shanghai University of Traditional Chinese Medicine; Huadong Hospital, Fudan University; and Shenzhen Traditional Chinese Medicine Hospital.

- Categories:

575 Views

575 Views

This dataset comprises high-resolution imaging data of biological porcine, clinically approved porcine and bovine, and chick embryo heart tissues. The dataset includes comprehensive anatomical and structural details, making it valuable for research in cardiovascular biology, tissue engineering, and computational modeling. The porcine and bovine heart samples are clinically approved, ensuring relevance for translational and preclinical studies. The chick embryo heart data provides insights into early cardiac development.

- Categories:

99 Views

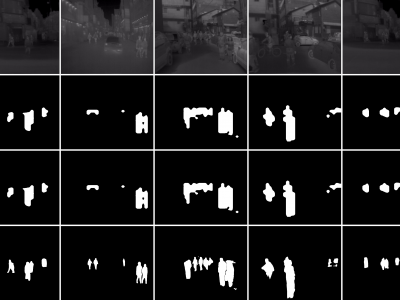

99 ViewsThese are tight pedestrian masks for the thermal images present in the KAIST Multispectral pedestrian dataset, available at https://soonminhwang.github.io/rgbt-ped-detection/

Both the thermal images themselves as well as the original annotations are a part of the parent dataset. Using the annotation files provided by the authors, we develop the binary segmentation masks for the pedestrians, using the Segment Anything Model from Meta.

- Categories:

618 Views

618 ViewsWe introduce two novel datasets for cell motility and wound healing research: the Wound Healing Assay Dataset (WHAD) and the Cell Adhesion and Motility Assay Dataset (CAMAD). WHAD comprises time-lapse phase-contrast images of wound healing assays using genetically modified MCF10A and MCF7 cells, while CAMAD includes MDA-MB-231 and RAW264.7 cells cultured on various substrates. These datasets offer diverse experimental conditions, comprehensive annotations, and high-quality imaging data, addressing gaps in existing resources.

- Categories:

963 Views

963 Views

This dataset consists of radiation pattern images of three distinct antennas: Patch, Monopole, and Dipole, sourced from existing literature. The database was developed using pixel sampling techniques to generate a large and diverse set of images. These images were further processed to include various geometric shapes, such as symmetric and asymmetric forms, as well as triangle and square shapes, with window sizes ranging from 2 × 2 to 100 × 100 pixels.

- Categories:

183 Views

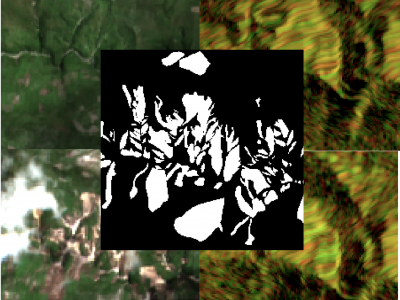

183 ViewsThe detection of the collapse of landslides trigerred by intense natural hazards, such as earthquakes and rainfall, allows rapid response to hazards which turned into disasters. The use of remote sensing imagery is mostly considered to cover wide areas and assess even more rapidly the threats. Yet, since optical images are sensitive to cloud coverage, their use is limited in case of emergency response. The proposed dataset is thus multimodal and targets the early detection of landslides following the disastrous earthquake which occurred in Haiti in 2021.

- Categories:

998 Views

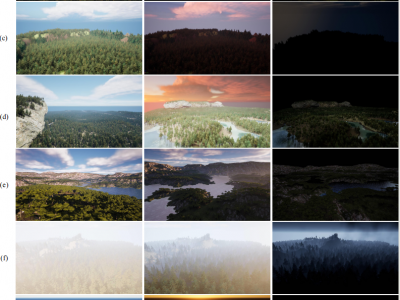

998 ViewsForest wildfires are one of the most catastrophic natural disasters, which poses a severe threat to both the ecosystem and human life. Therefore, it is imperative to implement technology to prevent and control forest wildfires. The combination of unmanned aerial vehicles (UAVs) and object detection algorithms provides a quick and accurate method to monitor large-scale forest areas.

- Categories:

1356 Views

1356 Views