CURE-TSD: Challenging Unreal and Real Environment for Traffic Sign Detection

- Citation Author(s):

- Dogancan Temel (Georgia Institute of Technology), Tariq Alshawi (King Saud University), Min-Hung Chen (Georgia Institute of Technology), Ghassan AlRegib (Georgia Institute of Technology)

- Submitted by:

- Ghassan AlRegib

- DOI:

- 10.21227/en9z-mq69

Abstract

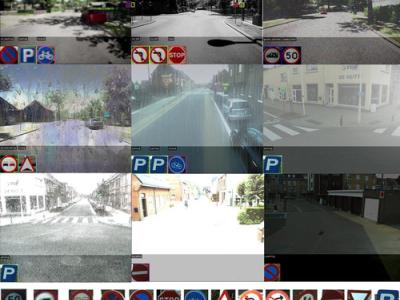

As one of the research directions at OLIVES Lab @ Georgia Tech, we focus on the robustness of data-driven algorithms under the diverse challenging conditions in which trained models may be deployed. To this end, we introduced CURE-TSD, a large-scale (~1.72M frames) traffic sign detection video dataset that is among the most comprehensive datasets with controlled synthetic challenging conditions. The video sequences in CURE-TSD are grouped into two classes: real data and unreal data. Real data correspond to processed versions of sequences acquired in the real world. Unreal data correspond to synthesized sequences generated in a virtual environment.

There are 49 real sequences and 49 unreal sequences that do not include any specific challenge. We split these sequences into 70% training and 30% test sets, which gives 34 training videos and 15 test videos for both the real and the unreal challenge-free sequences. Each video sequence contains 300 frames.

The 49 challenge-free real video sequences are processed with 12 different types of effects at 5 different challenge levels, which results in 2,989 (49×12×5 + 49) video sequences. The 49 synthesized video sequences are processed with 11 different types of effects at 5 different challenge levels, which leads to 2,744 (49×11×5 + 49) video sequences. In total, there are 5,733 video sequences, which include around 1.72 million frames (5,733 × 300 = 1,719,900). Please refer to our GitHub page for code, papers, and more information.
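The counts above follow from simple arithmetic. The short Python sketch below recomputes them from the per-class numbers stated in the text; the constant and function names are illustrative only and are not part of the dataset release.

```python
# Recompute the CURE-TSD sequence and frame counts from the numbers in the text.
FRAMES_PER_SEQUENCE = 300
BASE_SEQUENCES = 49   # challenge-free sequences per class (real or unreal)
LEVELS = 5            # challenge levels 01-05
TEST_PER_CLASS = 15   # challenge-free test sequences per class


def total_sequences(num_effects: int) -> int:
    """Challenge-free sequences plus one sequence per (base sequence, effect, level)."""
    return BASE_SEQUENCES * num_effects * LEVELS + BASE_SEQUENCES


real = total_sequences(12)    # 12 effect types for real data
unreal = total_sequences(11)  # 11 effect types for unreal data
total = real + unreal
frames = total * FRAMES_PER_SEQUENCE
train_per_class = BASE_SEQUENCES - TEST_PER_CLASS

if __name__ == "__main__":
    print(real, unreal, total, frames, train_per_class)  # 2989 2744 5733 1719900 34
```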

Instructions:

The video files are named according to the following format: “sequenceType_sequenceNumber_challengeSourceType_challengeType_challengeLevel.mp4”

· sequenceType: 01 – Real data, 02 – Unreal data

· sequenceNumber: A number between 01 and 49

· challengeSourceType: 00 – No challenge source (i.e., no challenge), 01 – After Effects

· challengeType: 00 – No challenge, 01 – Decolorization, 02 – Lens blur, 03 – Codec error, 04 – Darkening, 05 – Dirty lens, 06 – Exposure, 07 – Gaussian blur, 08 – Noise, 09 – Rain, 10 – Shadow, 11 – Snow, 12 – Haze

· challengeLevel: A number between 01 and 05, where 01 is the least severe and 05 is the most severe challenge.
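To make the naming scheme concrete, here is a minimal Python sketch that splits a video file name into its five fields and checks them against the code tables above. It assumes every field is a zero-padded two-digit number and that only the base file name (no directory) is passed in; `VideoName` and `parse_video_name` are hypothetical helpers, not part of any official CURE-TSD tooling.

```python
import re
from dataclasses import dataclass

# Code tables copied from the list above.
SEQUENCE_TYPES = {1: "Real data", 2: "Unreal data"}
CHALLENGE_SOURCE_TYPES = {0: "No challenge source", 1: "After Effects"}
CHALLENGE_TYPES = {
    0: "No challenge", 1: "Decolorization", 2: "Lens blur", 3: "Codec error",
    4: "Darkening", 5: "Dirty lens", 6: "Exposure", 7: "Gaussian blur",
    8: "Noise", 9: "Rain", 10: "Shadow", 11: "Snow", 12: "Haze",
}

# Assumption: five zero-padded two-digit fields, e.g. "01_04_01_09_03.mp4".
_VIDEO_NAME = re.compile(r"^(\d{2})_(\d{2})_(\d{2})_(\d{2})_(\d{2})\.mp4$")


@dataclass(frozen=True)
class VideoName:
    sequence_type: int
    sequence_number: int
    challenge_source_type: int
    challenge_type: int
    challenge_level: int


def parse_video_name(filename: str) -> VideoName:
    """Split a video file name into its five numeric fields and check the documented codes."""
    match = _VIDEO_NAME.match(filename)
    if match is None:
        raise ValueError(f"unexpected video file name: {filename!r}")
    name = VideoName(*(int(field) for field in match.groups()))
    if name.sequence_type not in SEQUENCE_TYPES:
        raise ValueError(f"unknown sequence type in {filename!r}")
    if not 1 <= name.sequence_number <= 49:
        raise ValueError(f"sequence number out of range in {filename!r}")
    if name.challenge_source_type not in CHALLENGE_SOURCE_TYPES:
        raise ValueError(f"unknown challenge source type in {filename!r}")
    if name.challenge_type not in CHALLENGE_TYPES:
        raise ValueError(f"unknown challenge type in {filename!r}")
    # The level field is not range-checked: the text gives 01-05 for challenged
    # videos but does not state its value for challenge-free videos.
    return name
```

For example, the hypothetical name `01_04_01_09_03.mp4` parses as real-data sequence 04 with challenge type 09 (Rain) at level 03, and `CHALLENGE_TYPES[name.challenge_type]` recovers the readable label.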

Test Sequences

We split the video sequences into a 70% training set and a 30% test set. The sequence numbers in the test set are listed below:

[01_04_x_x_x, 01_05_x_x_x, 01_06_x_x_x, 01_07_x_x_x, 01_08_x_x_x, 01_18_x_x_x, 01_19_x_x_x, 01_21_x_x_x, 01_24_x_x_x, 01_26_x_x_x, 01_31_x_x_x, 01_38_x_x_x, 01_39_x_x_x, 01_41_x_x_x, 01_47_x_x_x, 02_02_x_x_x, 02_04_x_x_x, 02_06_x_x_x, 02_09_x_x_x, 02_12_x_x_x, 02_13_x_x_x, 02_16_x_x_x, 02_17_x_x_x, 02_18_x_x_x, 02_20_x_x_x, 02_22_x_x_x, 02_28_x_x_x, 02_31_x_x_x, 02_32_x_x_x, 02_36_x_x_x]

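All videos with other sequence numbers belong to the training set. Here, “x” is a wildcard for the challenge source type, challenge type, and challenge level fields described earlier, so every challenge variant of a test sequence is also in the test set.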
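Because the split depends only on the sequence type and sequence number, assigning a file to a split is a set lookup. The sketch below copies the test list into a Python dictionary; `TEST_SEQUENCES` and `split_of` are hypothetical names, and the function assumes file names follow the video or annotation naming formats described in this section.

```python
from pathlib import PurePath

# Test-set sequence numbers per sequence type (1 = real, 2 = unreal), copied from the list above.
TEST_SEQUENCES = {
    1: {4, 5, 6, 7, 8, 18, 19, 21, 24, 26, 31, 38, 39, 41, 47},
    2: {2, 4, 6, 9, 12, 13, 16, 17, 18, 20, 22, 28, 31, 32, 36},
}


def split_of(filename: str) -> str:
    """Return "test" or "train" for a video or annotation file name.

    Only the first two fields matter, because the "x" wildcards cover
    every challenge variant of a sequence.
    """
    stem = PurePath(filename).name.split(".", 1)[0]  # drop any directory and extension
    fields = stem.split("_")
    if len(fields) < 2:
        raise ValueError(f"unexpected file name: {filename!r}")
    sequence_type, sequence_number = int(fields[0]), int(fields[1])
    if sequence_type not in TEST_SEQUENCES:
        raise ValueError(f"unknown sequence type in {filename!r}")
    return "test" if sequence_number in TEST_SEQUENCES[sequence_type] else "train"
```

Using a set per sequence type keeps each lookup constant-time and makes it easy to check that each class has exactly 15 test sequences.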

The annotation files are named according to the following format: “sequenceType_sequenceNumber.txt”

Challenge source type, challenge type, and challenge level do not affect the annotations. Therefore, all video sequences with the same sequence type and sequence number share the same annotation file.

· sequenceType: 01 – Real data, 02 – Unreal data

· sequenceNumber: A number between 01 and 49
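Since every challenge variant of a sequence shares one annotation file, the annotation name can be derived by keeping only the first two fields of a video name. The Python sketch below shows this mapping and a grouping helper; `annotation_name` and `group_by_annotation` are hypothetical names, and the code assumes video names follow the five-field format given earlier.

```python
from collections import defaultdict
from pathlib import PurePath
from typing import Dict, Iterable, List


def annotation_name(video_filename: str) -> str:
    """Return the annotation file name shared by all challenge variants of a sequence."""
    stem = PurePath(video_filename).stem  # e.g. "01_04_01_09_03"
    fields = stem.split("_")
    if len(fields) != 5:
        raise ValueError(f"unexpected video file name: {video_filename!r}")
    sequence_type, sequence_number = fields[0], fields[1]
    return f"{sequence_type}_{sequence_number}.txt"


def group_by_annotation(video_filenames: Iterable[str]) -> Dict[str, List[str]]:
    """Group video files by the annotation file they share, preserving input order."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for name in video_filenames:
        groups[annotation_name(name)].append(name)
    return dict(groups)
```

Grouping this way lets each annotation file be loaded once and reused for all of its challenge variants.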

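Each line in an annotation file has the format “frameNumber_signType_llx_lly_lrx_lry_ulx_uly_urx_ury”, where ll, lr, ul, and ur denote the lower-left, lower-right, upper-left, and upper-right corners of the sign's bounding box, and x and y denote the horizontal and vertical coordinates. A visual example of the coordinate system is available on our GitHub page.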

· frameNumber: A number between 001 and 300

· signType: 01 – speed_limit, 02 – goods_vehicles, 03 – no_overtaking, 04 – no_stopping, 05 – no_parking, 06 – stop, 07 – bicycle, 08 – hump, 09 – no_left, 10 – no_right, 11 – priority_to, 12 – no_entry, 13 – yield, 14 – parking
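A minimal Python sketch for reading annotation lines follows. It assumes the underscore-delimited format described above, parses coordinates as floats in case they are not integers, and reads the ll/lr/ul/ur prefixes as lower-left, lower-right, upper-left, and upper-right corners. The header-skipping in `read_annotations` is purely defensive because the text does not say whether files contain a header line. `SignAnnotation`, `parse_annotation_line`, and `read_annotations` are hypothetical helpers, and the sample line used below is made up.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple

# Sign-type codes copied from the list above.
SIGN_TYPES = {
    1: "speed_limit", 2: "goods_vehicles", 3: "no_overtaking", 4: "no_stopping",
    5: "no_parking", 6: "stop", 7: "bicycle", 8: "hump", 9: "no_left",
    10: "no_right", 11: "priority_to", 12: "no_entry", 13: "yield", 14: "parking",
}
# Assumed meaning of the coordinate prefixes: lower-left, lower-right, upper-left, upper-right.
CORNERS = ("ll", "lr", "ul", "ur")


@dataclass
class SignAnnotation:
    frame: int
    sign_type: str
    corners: Dict[str, Tuple[float, float]]

    def bounding_box(self) -> Tuple[float, float, float, float]:
        """Axis-aligned (x_min, y_min, x_max, y_max) enclosing the four corners."""
        xs = [x for x, _ in self.corners.values()]
        ys = [y for _, y in self.corners.values()]
        return min(xs), min(ys), max(xs), max(ys)


def parse_annotation_line(line: str) -> SignAnnotation:
    """Parse "frameNumber_signType_llx_lly_lrx_lry_ulx_uly_urx_ury" into a record."""
    fields = line.strip().split("_")
    if len(fields) != 10:
        raise ValueError(f"expected 10 fields, got {len(fields)}: {line!r}")
    frame, sign = int(fields[0]), int(fields[1])
    if not 1 <= frame <= 300 or sign not in SIGN_TYPES:
        raise ValueError(f"frame or sign type out of range: {line!r}")
    coords = [float(value) for value in fields[2:]]
    points = list(zip(coords[0::2], coords[1::2]))  # [(llx, lly), (lrx, lry), (ulx, uly), (urx, ury)]
    return SignAnnotation(frame, SIGN_TYPES[sign], dict(zip(CORNERS, points)))


def read_annotations(lines: Iterable[str]) -> List[SignAnnotation]:
    """Parse all records, skipping blank lines and a textual header line if one is present."""
    records = []
    for line in lines:
        text = line.strip()
        if not text or not text[0].isdigit():
            continue
        records.append(parse_annotation_line(text))
    return records
```

For instance, the made-up line `001_06_100_200_150_200_100_150_150_150` yields frame 1, sign type `stop`, and an axis-aligned box `(100.0, 150.0, 150.0, 200.0)`. Computing the box from min/max of the corners avoids assuming which direction the y axis points.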
