Skeleton datasets for Normal, Antalgic, Stiff legged, Lurching, Steppage, and Trendelenburg gaits.

- Categories:

Skeleton datasets for Normal, Antalgic, Stiff legged, Lurching, Steppage, and Trendelenburg gaits.

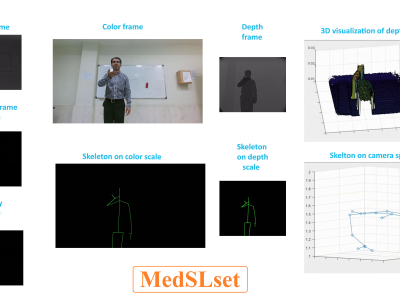

The dataset contains medical signs of the sign language including different modalities of color frames, depth frames, infrared frames, body index frames, mapped color body on depth scale, and 2D/3D skeleton information in color and depth scales and camera space. The language level of the signs is mostly Word and 55 signs are performed by 16 persons two times (55x16x2=1760 performance in total).

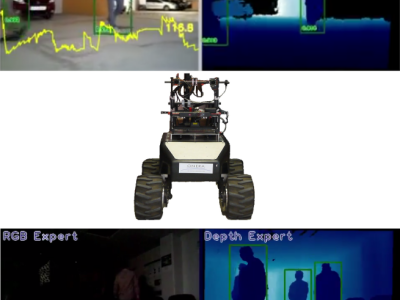

We introduce a new robotic RGBD dataset with difficult luminosity conditions: ONERA.ROOM. It comprises RGB-D data (as pairs of images) and corresponding annotations in PASCAL VOC format (xml files)

It aims at People detection, in (mostly) indoor and outdoor environments. People in the field of view can be standing, but also lying on the ground as after a fall.

Wearable Inertial Measurement Units (IMU) measuring acceleration, earth magnetic field and gyroscopic measurements can be considered for capturing human skeletal postures in real time. This dataset contains IMU readings (accelerometer, magnetometer and gyroscope) for common shoulder exercises: extension- flexion and abduction-adduction and simultaneously measures VICON readings and Kinect readings.