agriculture

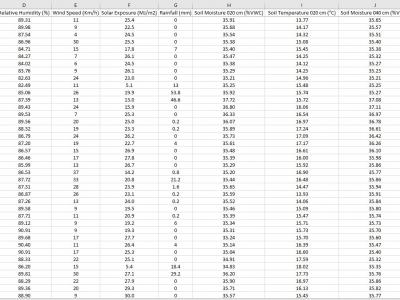

The data was collected from an IoT sensor node deployed at a pilot farm in Narrabri, Australia. The dataset includes soil characteristics such as soil moisture and soil temperature at depths of 20, 40, and 60 cm. The sensor node also records environmental factors that are critical for building machine learning models to predict evapotranspiration under diverse soil and environmental conditions.

831 Views

The Nematode Detection Dataset is a comprehensive collection of 1,368 high-quality microscope images specifically curated for the advancement of agricultural pest management through machine learning. This dataset has been meticulously assembled to aid in the detection, identification, and analysis of four key types of nematodes that are critical to global agriculture: Meloidogyne (Root-knot nematodes), Globodera pallida (Potato cyst nematodes), Pratylenchus (Root-lesion nematodes), and Ditylenchus (Stem nematodes).

167 Views

This dataset contains both artificial and real images of bramble flowers. The real images were taken with a RealSense D435 camera inside the West Virginia University greenhouse. All flowers are annotated in YOLO format with a bounding box and class name. The trained weights are also provided and can be used with the accompanying Python script to detect bramble flowers. The classifier can also determine whether a flower's center is visible or hidden, which is helpful in precision pollination projects.
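For readers unfamiliar with YOLO-format annotations, the sketch below shows how one label line is commonly interpreted: a class index followed by the box center, width, and height, all normalized to the image size. The function name, the sample line, and the 640x480 image size are hypothetical and are not taken from the dataset; its actual class names and image dimensions should be checked against the provided files.

```python
def yolo_to_pixel_box(line, image_width, image_height):
    """Convert one YOLO label line to (class_id, x_min, y_min, x_max, y_max) in pixels.

    Assumes the common layout: "<class> <x_center> <y_center> <width> <height>",
    with the four box values normalized to the range 0..1.
    """
    parts = line.split()
    class_id = int(parts[0])
    x_center, y_center, width, height = (float(v) for v in parts[1:5])

    # Scale the normalized center/size to pixel corner coordinates.
    x_min = (x_center - width / 2) * image_width
    y_min = (y_center - height / 2) * image_height
    x_max = (x_center + width / 2) * image_width
    y_max = (y_center + height / 2) * image_height
    return class_id, x_min, y_min, x_max, y_max


# Hypothetical label line and image size, for illustration only.
box = yolo_to_pixel_box("0 0.5 0.5 0.25 0.5", 640, 480)
print(box)
```

Converting to pixel corners is usually the first step before drawing boxes or cropping flower regions for the visible/hidden center classifier.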

492 Views

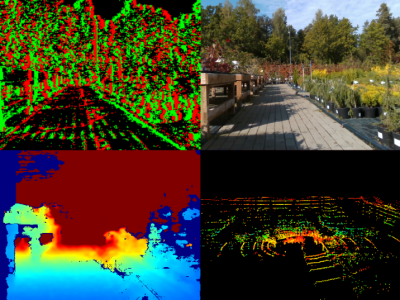

The Lettuce Farm SLAM Dataset (LFSD) is a VSLAM dataset captured by the VegeBot robot in a lettuce farm. It consists of RGB and depth images together with IMU and RTK-GPS sensor data. Lettuce plant detections and tracks in the images are annotated in the standard Multiple Object Tracking (MOT) format. The dataset aims to accelerate the development of algorithms for localization and mapping in agricultural fields, and for crop detection and tracking.
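As a rough illustration of MOT-style annotations, the sketch below groups rows by track ID. It assumes the comma-separated MOTChallenge layout (frame, track ID, box left, box top, box width, box height, then further fields); whether LFSD uses exactly these columns is an assumption, and the sample rows and the `parse_mot` helper are invented for this example.

```python
import csv
from collections import defaultdict
from io import StringIO


def parse_mot(text):
    """Group MOT-style rows by track ID.

    Assumed columns: frame, id, bb_left, bb_top, bb_width, bb_height, ...
    Any columns after the sixth are ignored here.
    """
    tracks = defaultdict(list)
    for row in csv.reader(StringIO(text)):
        if not row:
            continue  # skip blank lines
        frame, track_id = int(row[0]), int(row[1])
        left, top, width, height = (float(v) for v in row[2:6])
        tracks[track_id].append((frame, left, top, width, height))
    return dict(tracks)


# Made-up rows: two lettuce plants, one of them seen in two frames.
sample = (
    "1,1,100,200,50,60,1,-1,-1,-1\n"
    "2,1,104,202,50,60,1,-1,-1,-1\n"
    "1,2,300,220,48,58,1,-1,-1,-1\n"
)
tracks = parse_mot(sample)
for track_id, boxes in sorted(tracks.items()):
    print(track_id, len(boxes))
```

Grouping by track ID makes it straightforward to follow a single plant across frames, which is the view most tracking evaluations need.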

714 Views

In agriculture, the development of early treatment techniques for plant leaf diseases can be significantly enhanced by employing precise and rapid automatic detection methods. Within this realm of research, two common scenarios encountered in real field cases are the identification of different severity stages of diseases and the detection of multiple pathogens simultaneously affecting a single plant leaf. One major challenge faced in this area is the lack of publicly available datasets that contain images captured under these specific conditions.

513 Views

Guava fruit production is one of the main sources of economic growth in Asian countries; world production of guava in 2019 was 55 million tons. Guava disease is a major cause of economic loss and reduces both the quantity and quality of the fruit. The original guava fruit disease dataset consists of 38 images of Phytophthora, 30 images of root, and 34 images of scab guava disease, each 650x650x3 pixels.

1314 Views

A new generation of computer vision, namely event-based or neuromorphic vision, provides a new paradigm for capturing and processing visual data. Event-based vision is a state-of-the-art technology for robot vision. It is particularly promising for visual navigation tasks in both mobile robots and drones. Because the visual sensors used in event-based vision are highly novel, only a few datasets aimed at visual navigation tasks are publicly available.

1220 Views

A new generation of computer vision, namely event-based or neuromorphic vision, provides a new paradigm for capturing and processing visual data. Event-based vision is a state-of-the-art technology for robot vision. It is particularly promising for visual navigation tasks in both mobile robots and drones. Because the visual sensors used in event-based vision are highly novel, only a few datasets aimed at visual navigation tasks are publicly available.

1384 Views
