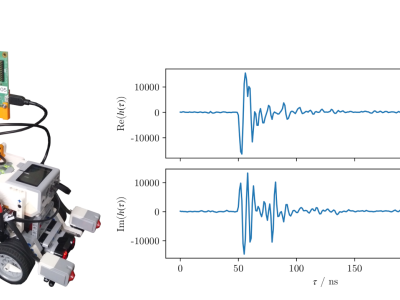

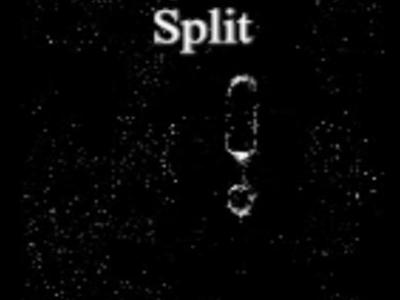

Digital microfluidics is a technique for manipulating nanoliter-to-microliter droplets based on electrowetting on dielectric. It has great application potential in clinical diagnosis, life science, and environmental monitoring. Because droplets move quickly and can be manipulated with a high degree of freedom, automated and intelligent approaches for droplet monitoring and control are urgently needed.
