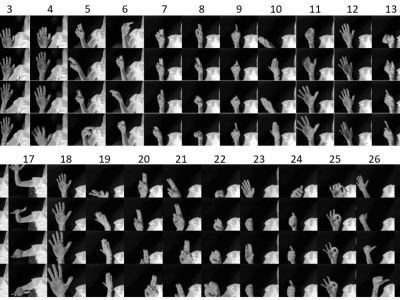

For gesture recognition, radar sensors provide a unique alternative to other input devices, such as cameras or motion sensors. They combine low sensitivity to lighting conditions, the ability to see through surfaces, and preservation of user privacy with a small form factor and low power usage. However, radar signals can be noisy and complex to analyze, and they do not transfer from one radar to another.

- Categories: