computer vision; object tracking; segmentation

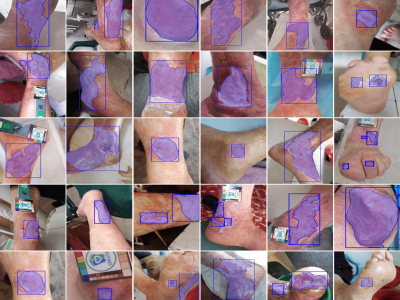

Chronic wounds pose an ongoing health concern globally, largely due to the prevalence of conditions such as diabetes and leprosy. The standard method of monitoring these wounds is visual inspection by healthcare professionals, a practice that can be difficult for patients in remote areas with inadequate transportation and healthcare infrastructure. This has led to the development of algorithms for the analysis and follow-up of wound images, which perform image-processing tasks such as classification, detection, and segmentation.


1328 Views

Quantifying the performance of methods for tracking and mapping tissue in endoscopic environments is essential for enabling image guidance and automation of medical interventions and surgery. Datasets developed so far either use rigid environments, use visible markers, or require annotators to label salient points in videos after collection. These approaches are, respectively, not general, visible to algorithms, or costly and error-prone. We introduce a novel labeling methodology along with a dataset that uses it, Surgical Tattoos in Infrared (STIR).


2534 Views

Dronescape is a dataset of 25 drone videos showing vast areas filled with trees, rivers, and mountains. It includes two subsets: 25 videos with tree segmentation and 25 videos without tree segmentation, offering views of the same kind of scenery with and without segmented tree regions. Tree regions are highlighted using the Segment Anything Model (SAM) and the Track Anything library, and video object tracking and segmentation techniques are used to follow these regions throughout the dataset.


735 Views
