Carbon sequestration

- Citation Author(s):

- Submitted by:

- Mohamad Awad

- Last updated:

- DOI:

- 10.21227/fney-ys26

- Data Format:

- Research Article Link:

Abstract

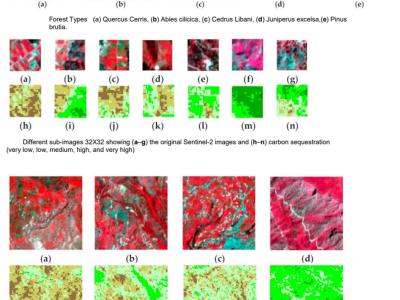

We used Sentinel-2 images to create the dataset In order to estimate sequestered carbon in the above-ground forest Biomass. Moreover, fieldwork was completed to gather related forest biomass volume. The clipped image has a size of 1115 × 955 pixels and consists of bands 3, 4, and 8, which correspond to green, red, and near-infrared. These bands were selected for two reasons: they have the highest spatial resolution, and they are representative of the crops’ photosynthesis process. We created different datasets that consisted of tiled sub-images with three different sizes of 32 × 32 (1050 images), 64 × 64 (270 images), and 128 × 128 (72 images) and three bands representing different spectrums (green, red, and near-infrared). The other datasets consisted of the same size and number of tiles but only represent carbon sequestration with six classes (no carbon, very low, low, moderate, high, and very high). A script was written in the Python language to classify the Sentinel-2 sub-images into six classes based on the computed canopy density statistics.

In brief, each dataset has different number of images and a different size. Each dataset consists of raw Sentinel sub-images and categorized training data.

Instructions:

There are two directories "Satellite_tiles" and "Train_64_by_64". In the first directory, one can find 270 images of size 64X64 representing 3-band Sentinel-2 images as described in the abstract. In the second directory, two sub-directories represent two formats JPG and CSV. The user can select the one most suitable for solving the problem of estimating carbon sequestration. The first directory can be used as the X-train and X-test while the second directory can be used as Y-train and Y-test in the model.

X-train, X-test, Y-train, Y-test = train_test_split(Satellite_tiles[0:270], Train[0:270, 0:6],random_state=2020,test_size=0.25) # 6 in Train represents 6 classes of carbon sequestration.

The same applies to the second and third dataset where there are two directories in each "Satellite_tiles" and "Train_32_by_32" or "Train_128_by_128" .

All the above-mentioned datasets are in one compressed file called ALL_Datasets_Carbon.zip

Programs written in Python language can be found in the following GitHub repository:

https://github.com/ma850419/Flexible_Net/tree/main

The programs can be used to train deep learning programs using the carbon sequestration datasets

1123 views

1123 views

In reply to Hello by Miguel Angel Suarez

Thank you Miguel