Dataset Entries from this Author

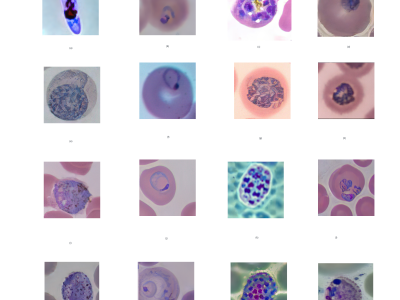

The MalariaSD dataset covers four malaria parasite species, *Plasmodium falciparum*, *Plasmodium malariae*, *Plasmodium vivax*, and *Plasmodium ovale*. Its images are categorized into four life-cycle stages: ring, schizont, trophozoite, and gametocyte.

This dataset is a collection of images of windows photographed alongside artificial light sources such as bulbs, LEDs, and tube lights. Each image combines natural and artificial illumination, so the collection spans a range of lighting scenarios. It can support applications in architectural and interior design as well as in computer vision and image processing.

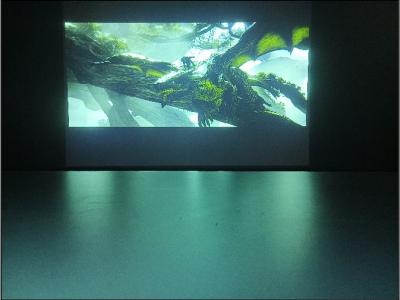

Dronescape is a dataset of 25 drone videos showing large areas of trees, rivers, and mountains. It is provided in two subsets: the 25 videos with tree segmentation and the 25 videos without it, so the footage can be compared with and without segmented tree regions. Tree regions are segmented with the Segment Anything Model (SAM) and tracked across frames with the Track Anything library, using video object tracking and segmentation.

With the increasing use of drones for surveillance and monitoring purposes, there is a growing need for reliable and efficient object detection algorithms that can detect and track objects in aerial images and videos. To develop and test such algorithms, datasets of aerial videos captured from drones are essential.

The dataset consists of two walking videos recorded with a 1080p Intel RealSense depth camera: one recorded with the person blindfolded and one without the blindfold. Walking activities were recorded using a hat fixed on the head of the blindfolded person. The dataset contains the original videos, frames extracted from them with ffmpeg, and a processed video with skipped frames, also created with ffmpeg. It is intended for machine vision tasks such as instance segmentation.
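The frame extraction and frame-skipping steps described above can be sketched in Python as follows. This is a minimal sketch, assuming ffmpeg is installed and on the PATH. The function names, file names, and the skip step of 5 are hypothetical, because the dataset description does not state which ffmpeg options were actually used. The `select` and `setpts` filters are standard ffmpeg video filters.

```python
import shlex
from pathlib import Path

# Hypothetical helpers: the dataset's actual ffmpeg options are not documented.


def extract_frames_cmd(video: str, out_dir: str) -> list[str]:
    """Build an ffmpeg command that writes every frame of `video` as a numbered PNG."""
    pattern = str(Path(out_dir) / "frame_%05d.png")
    return ["ffmpeg", "-i", video, pattern]


def skip_frames_cmd(video: str, output: str, step: int) -> list[str]:
    """Build an ffmpeg command that keeps every `step`-th frame of `video`."""
    if step < 1:
        raise ValueError("step must be >= 1")
    # select keeps frames whose index n is a multiple of step (the comma is escaped
    # for the filtergraph parser); setpts regenerates timestamps so the kept frames
    # play back without gaps.
    vf = f"select=not(mod(n\\,{step})),setpts=N/FRAME_RATE/TB"
    # -an drops any audio track, which would no longer line up with the video.
    return ["ffmpeg", "-i", video, "-vf", vf, "-an", output]


if __name__ == "__main__":
    # Hypothetical file names, printed as shell-ready strings.
    print(shlex.join(extract_frames_cmd("walk_blindfold.mp4", "frames")))
    print(shlex.join(skip_frames_cmd("walk_blindfold.mp4", "walk_skip5.mp4", 5)))
```

To run a command rather than print it, pass the list to `subprocess.run(cmd, check=True)`. Building an argument list instead of a single shell string avoids quoting problems with the filter expression.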

The Autofocus Projector Dataset is a collection of 555 images and 150 videos captured while a projector displayed images and videos at varying levels of Gaussian blur. The blur levels range from fully focused to the maximum left and right Gaussian blur permitted by the projector's specifications. The data was recorded with the camera of a Redmi Note 11T 5G smartphone, which has a 50 MP, f/1.8, 26 mm (wide) sensor with PDAF for still images and records video at 1080p@30 fps.

A mobile sensor is a form of smart technology that detects small or large changes in its environment and can respond by performing a particular task. Smartphones equipped with such sensors can be used to monitor and record simple daily activities such as walking, climbing stairs, and eating. This dataset is intended for forensic use, to help distinguish day-to-day activities from criminal actions. To build it, we collected data for 13 classes of daily activities, all performed by a single individual.