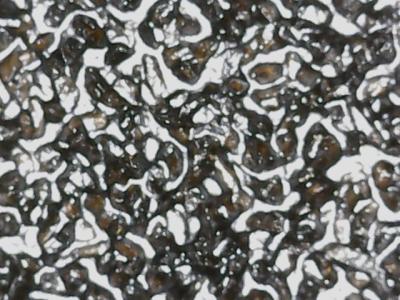

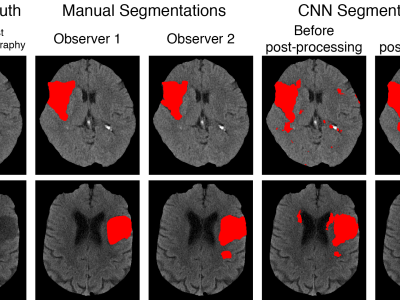

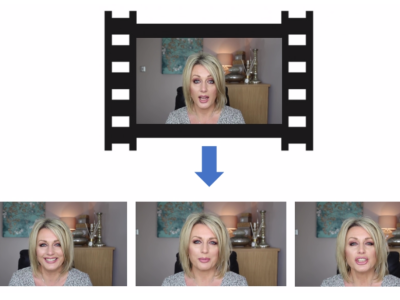

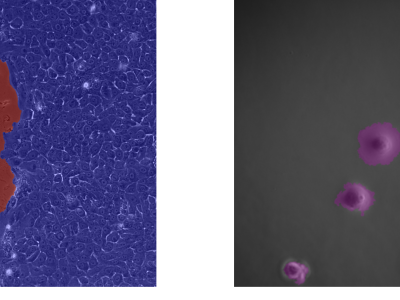

We introduce two novel datasets for cell motility and wound healing research: the Wound Healing Assay Dataset (WHAD) and the Cell Adhesion and Motility Assay Dataset (CAMAD). WHAD comprises time-lapse phase-contrast images of wound healing assays using genetically modified MCF10A and MCF7 cells, while CAMAD includes MDA-MB-231 and RAW264.7 cells cultured on various substrates. These datasets offer diverse experimental conditions, comprehensive annotations, and high-quality imaging data, addressing gaps in existing resources.

- Categories: