Signing in the Wild dataset

- Citation Author(s):

- Submitted by:

- Mark Borg

- Last updated:

- DOI:

- 10.21227/w24f-yh32

- Data Format:

- Links:

761 views

761 views

- Categories:

- Keywords:

Abstract

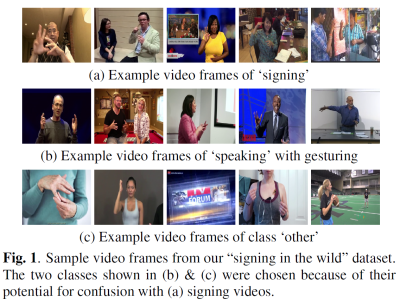

Our Signing in the Wild dataset consists of various videos harvested from YouTube containing people signing in various sign languages and doing so in diverse settings, environments, under complex signer and camera motion, and even group signing. This dataset is intended to be used for sign language detection.

For the negative set, we created two classes of videos, labelled ‘speaking’ and ‘other’. Our motivation for the ‘speaking’ class is that speech is often accompanied by hand gestures (gesticulation), which can be easily confused with signing. Signing can be discriminated by its linguistic nature, i.e., its distinct phonological, morphological and categorical (discrete) structures, while gesticulation tends to be more spontaneous, idiosyncratic and analogue in nature.

For the ‘other’ class, we looked for distractors to both ‘signing’ and ‘speaking’, i.e., videos containing hand movements that are quite similar to signing/gesticulation and thus might confuse a classifier. Examples include: miming, hand exercises, various manual activities like playing instruments, painting, writing, yoga and martial arts, sports like table tennis, etc. Also included are activities similar to speech, like people laughing, clapping, nodding, listening to other speakers, etc.

A total of 1120 videos are included in our dataset, each video contributing the first 6.6 minutes, resulting in 2000 frames per video when sampled at 5Hz. We have 1.45 million video frames in total. Our videos are untrimmed, i.e., a video can contain multiple activities, background scenes, scene cuts, and other actions done by the same or different actors. Thus the videos are unconstrained both spatially and also temporally. This is in line with recent trends in video action recognition [8], and unlike ASLR datasets where trimmed videos are the norm. In particular, several videos in our dataset contain all 3 classes (occasionally with temporal overlap), and sometimes the same person alternating between signing and speaking.

We performed manual groundtruthing at video frame level. Since action boundaries can be inherently fuzzy, we consider a short temporal context (10 frames) surrounding the frame to be labelled in order to decide on its class label. We also adopt certain spatial guidelines, e.g. mouth movements must be visible for action ‘speaking’, thus eliminating distant views and when the speaker turns his/her back to the camera. Ambiguous cases are left unlabelled. We annotate video segments that do not contain signing or speaking as ‘other’, including opening/closing credits, title screens, scene transitions, animations, background scenes, etc.

If you find this dataset useful, please cite the following paper:

Mark Borg, Kenneth P. Camilleri, "Sign Language Detection "In The Wild" With Recurrent Neural Networks", ICASSP 2019.

Any comments, suggestions, feedback are welcome: mborg2005 <at> gmail <dot> com

Instructions:

Please read the additional files and visit the page links for further information about this dataset.

The groundtruth can be found in "groundtruth.txt", a space-delimited file, where each row consists of the video ID (youtube identifier), the frame number, and the class label.

Class labels can be:

S = signing

P = speaking (with gesticulation)

n = other (any other manual activity, and non-speaking or non-signing activities)

? = unlabelled or ambiguous frame

The file "candidate_video_urls.txt" contains the full list of YouTube videos that matched the search queries. Please note that this list has not yet been filtered and contains the raw search query results returned by the YouTube API. Some of the videos may contain inappropriate content and their inclusion in this file is performed automatically and they have not yet been manually vetted.

From the candidate_video_urls list, we manually checked and vetted a subset of the videos. These can be found in "Signing_in_the_Wild_video_urls.txt". This is the official list of videos that constitutes this dataset, and the videos recommended for use.

Due to copyright issues, we can not include the YouTube videos here. So you would need to download the videos yourself. We provide Python scripts in our code repository to allow you to download the videos given in "Signing_in_the_Wild_video_urls.txt" (Please see the download_youtube_video_list.py script in https://github.com/mark-borg/sign-language-detection). Alternatively, you could use any freely available download tool, such as youtube-dl.exe (available from http://rg3.github.io/youtube-dl).

We also provide intermediate files that we generated while processing the videos, in case they are useful. For example, frame-difference images of the videos, optical-flow processed images of the videos, motion history images, and the trained neural network models (we used Keras and python) are provided. The intermediate processed video frames are provided as JPEGs or as Numpy files, while the network models are available as H5 files. Further detail of the processing performed on this dataset can be found in our paper.

Dataset Files

- The video list returned by the search queries (not manually vetted) (Size: 3.24 MB)

- The list of videos that have been manually checked and vetted. These constitute the official videos of this dataset (Size: 49.65 KB)

- The groundtruth data (Size: 27.48 MB)

- Trained neural network on frame difference images (Keras model) (Size: 43.01 MB)

- Trained neural network on optical flow data (Keras model) (Size: 43.01 MB)

- Trained neural network on motion history images (MHI) (Keras model) (Size: 43.01 MB)

- Trained neural network on the raw RGB video frames (Keras model) (Size: 43.01 MB)