Dataset Entries from this Author

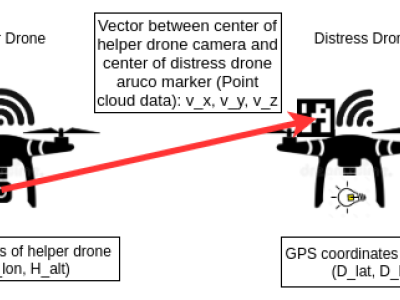

We design a solution for coordinated localization between two unmanned aerial vehicles (UAVs) using radio and camera perception. The work addresses the case where a UAV's Global Positioning System (GPS) has failed or is unavailable. Our approach allows one UAV with a functional GPS unit to coordinate the localization of another UAV whose GPS is compromised or missing. The solution combines sensor fusion with coordinated wireless communication.

Open Access Entries from this Author

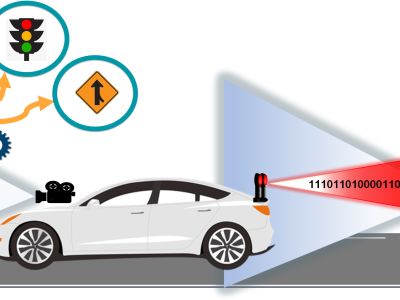

This dataset was used in our work "See-through a Vehicle: Augmenting Road Safety Information using Visual Perception and Camera Communication in Vehicles", published in the IEEE Transactions on Vehicular Technology (TVT). In this work, we present the design, implementation, and evaluation of non-line-of-sight (NLOS) perception to achieve virtual see-through functionality for road vehicles.
