YawDD: Yawning Detection Dataset

- Citation Author(s): Shabnam Abtahi, Mona Omidyeganeh, Behnoosh Hariri

- Submitted by: Shervin Shirmohammadi

- DOI: 10.21227/e1qm-hb90

40852 views

Abstract

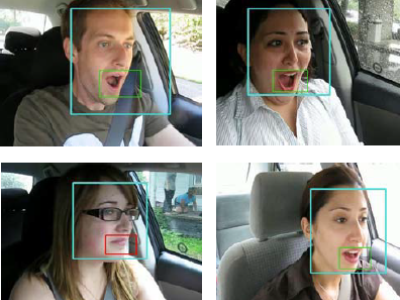

A dataset of videos, recorded by an in-car camera in an actual car, of drivers with various facial characteristics (male and female, with and without glasses/sunglasses, different ethnicities) who are talking, singing, being silent, and yawning. It can be used primarily to develop and test algorithms and models for yawning detection, but also for recognition and tracking of the face and mouth. The videos are taken in natural and varying illumination conditions. The videos come in two sets, as described next:

In the first set, the camera is installed under the front mirror of the car. This set provides 322 videos, each showing one of three situations: (1) normal driving (no talking), (2) talking or singing while driving, and (3) yawning while driving. Each subject has 3 or 4 videos.

In the second set, the camera is installed on the driver’s dash. This set provides 29 videos, one for each subject, and each video contains all three situations: driving silently, driving while talking, and driving while yawning.

Instructions:

You can use all videos for research. You can also display screenshots of some (not all) videos in your own publications. Check the “Allow Researchers to use picture in their paper” column in the table to see whether you can use a screenshot of a particular video. If that column is “no” for a particular video, you are NOT allowed to display pictures from that video in your own publications.
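The permission check described above can be automated before choosing figures for a paper. The following is a minimal sketch, assuming the table has been exported to CSV. The `Video` column name, the sample rows, and the "yes" value are hypothetical placeholders (the text above only confirms the permission column's name and the value “no”). Anything other than an explicit "yes" is treated as not allowed.

```python
import csv
import io
from typing import Dict, Iterable, Set

# Column name as quoted in the instructions above.
PERMISSION_COLUMN = "Allow Researchers to use picture in their paper"
# Hypothetical name for the column that identifies each video.
VIDEO_COLUMN = "Video"


def videos_allowing_screenshots(rows: Iterable[Dict[str, str]]) -> Set[str]:
    """Return the videos whose permission column is an explicit "yes".

    Missing or unexpected values are treated as "no" to stay on the safe side.
    """
    allowed = set()
    for row in rows:
        answer = (row.get(PERMISSION_COLUMN) or "").strip().lower()
        if answer == "yes":
            allowed.add(row[VIDEO_COLUMN].strip())
    return allowed


# Placeholder rows for illustration only; they are NOT taken from the real table.
SAMPLE_TABLE = """Video,Allow Researchers to use picture in their paper
example_video_a.avi,yes
example_video_b.avi,no
example_video_c.avi,Yes
"""

allowed = videos_allowing_screenshots(csv.DictReader(io.StringIO(SAMPLE_TABLE)))
print(sorted(allowed))
```

To use it with a real export, replace `io.StringIO(SAMPLE_TABLE)` with an open file handle and adjust `VIDEO_COLUMN` to match the actual header.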

The videos are unlabeled, since it is very easy to see the yawning sequences. For more details, please see:

S. Abtahi, M. Omidyeganeh, S. Shirmohammadi, and B. Hariri, “YawDD: A Yawning Detection Dataset”, Proc. ACM Multimedia Systems, Singapore, March 19-21, 2014, pp. 24-28. DOI: 10.1145/2557642.2563678
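Because the videos are unlabeled, anyone training or evaluating a detector has to mark the yawning sequences by hand. The following is a minimal sketch of one way to turn hand-noted yawning intervals (in seconds) into per-frame labels. The function name, the interval format, and the frame rate passed in are illustrative assumptions, not part of the dataset.

```python
import math
from typing import List, Tuple


def intervals_to_frame_labels(
    intervals: List[Tuple[float, float]],
    num_frames: int,
    fps: float,
) -> List[int]:
    """Return one 0/1 label per frame; 1 marks a frame inside a yawning interval.

    `intervals` holds (start_seconds, end_seconds) pairs noted by a human
    annotator. Frame i is assumed to cover the time span [i / fps, (i + 1) / fps).
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    labels = [0] * num_frames
    for start_s, end_s in intervals:
        if end_s < start_s:
            raise ValueError(f"interval ends before it starts: {(start_s, end_s)}")
        # Clamp to the valid frame range so intervals may run past the video end.
        first = max(0, math.floor(start_s * fps))
        last = min(num_frames, math.floor(end_s * fps) + 1)
        for i in range(first, last):
            labels[i] = 1
    return labels


# Hypothetical annotation: one yawn from 1.0 s to 1.5 s in a 50-frame clip at 10 fps.
labels = intervals_to_frame_labels([(1.0, 1.5)], num_frames=50, fps=10.0)
print(sum(labels), "frames labeled as yawning")
```

The actual frame rate and frame count should be read from each video file rather than assumed.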
