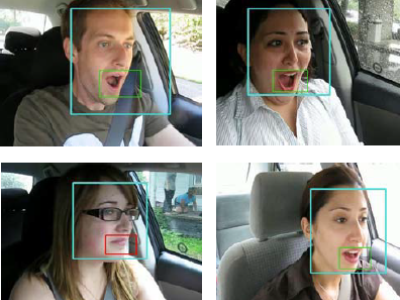

This dataset contains videos of facial expressions recorded from different angles. The top videos were shot with a Logitech C270 webcam, and the bottom ones with an LG G6 smartphone. All videos are continuous 480p recordings captured from a range of angles.

It is intended as a dataset for facial expression recognition under varying camera angles and head poses.
