GSET Somi: A Game-Specific Eye Tracking Dataset for Somi

- Citation Author(s):

- Saman Zad Tootaghaj, Sajad Mowlaei

- Submitted by:

- Shervin Shirmohammadi

- DOI:

- 10.21227/bvt7-3b15

1087 views

Abstract

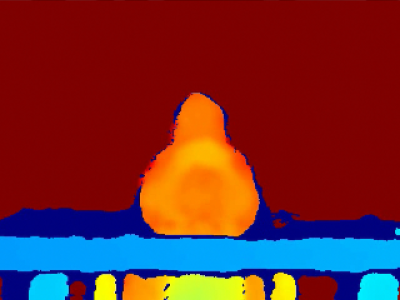

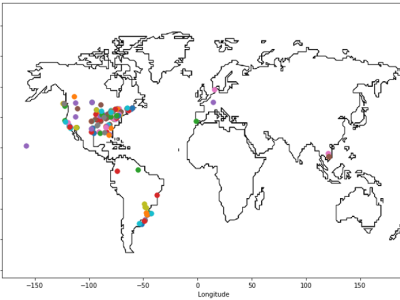

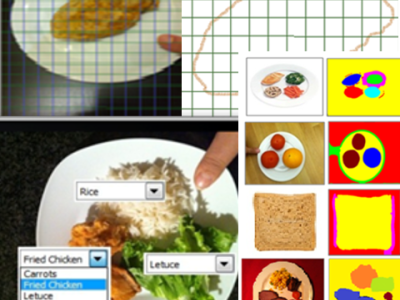

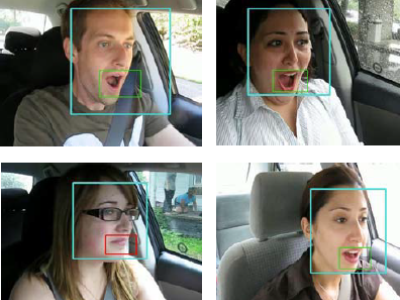

This is an eye-tracking dataset of 84 computer game players who played the side-scrolling cloud game Somi. The game was streamed as video from the cloud to the player. The dataset consists of 135 raw videos (YUV) at 720p and 30 fps, with eye-tracking data for both the left and right eyes. Male and female players were asked to play the game in front of a remote eye-tracking device. For each player, we recorded gaze points, video frames of the gameplay, and mouse and keyboard commands. For each video frame, a list of its game objects with their locations and sizes was also recorded. This data, synchronized with the eye-tracking data, allows one to calculate the amount of attention that each object or group of objects draws from each player. The dataset can be used for designing and testing game-specific visual attention models.
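As a rough illustration of the per-object attention calculation mentioned above, the following sketch counts the share of gaze samples that fall inside each object's bounding box. The record layout is an assumption made for this example: `GameObject`, `attention_share`, and the dictionary inputs are hypothetical names, and the dataset's actual file format is described in the accompanying paper.

```python
from collections import Counter
from typing import Dict, List, NamedTuple, Tuple


class GameObject(NamedTuple):
    """Hypothetical per-frame object record (not the dataset's file format)."""
    name: str       # object label
    x: float        # left edge in pixels
    y: float        # top edge in pixels
    width: float    # box width in pixels
    height: float   # box height in pixels


def attention_share(
    gaze_by_frame: Dict[int, Tuple[float, float]],
    objects_by_frame: Dict[int, List[GameObject]],
) -> Dict[str, float]:
    """Return, per object name, the fraction of gaze samples inside its box.

    A gaze sample that lies inside several overlapping boxes is counted
    once for each of them, so the shares need not sum to 1.
    """
    hits: Counter = Counter()
    total = 0
    for frame, (gx, gy) in gaze_by_frame.items():
        total += 1
        for obj in objects_by_frame.get(frame, []):
            inside_x = obj.x <= gx < obj.x + obj.width
            inside_y = obj.y <= gy < obj.y + obj.height
            if inside_x and inside_y:
                hits[obj.name] += 1
    if total == 0:
        return {}
    return {name: count / total for name, count in hits.items()}
```

Counting hits per bounding box is only one simple attention measure; a real analysis would likely also account for eye-tracker accuracy and for samples where gaze was not detected.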

Instructions:

- The AVI offset is the frame at which data gathering started.

- The 1st frame of each YUV file is the 901st frame of its corresponding AVI file.

- For detailed info and instructions, please see:

Hamed Ahmadi, Saman Zad Tootaghaj, Sajad Mowlaei, Mahmoud Reza Hashemi, and Shervin Shirmohammadi, “GSET Somi: A Game-Specific Eye Tracking Dataset for Somi”, Proc. ACM Multimedia Systems, Klagenfurt am Wörthersee, Austria, May 10-13 2016, 6 pages. DOI: 10.1145/2910017.2910616
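The frame correspondence stated in the instructions can be sketched as a pair of index conversions, together with a byte offset for seeking into a raw YUV file. The helper names are hypothetical, frame numbers are 1-based as in the instructions, and the frame size assumes 1280x720 8-bit planar YUV 4:2:0, which this page does not confirm (it only states 720p YUV).

```python
# From the instructions: the 1st YUV frame is the 901st AVI frame.
YUV_TO_AVI_SHIFT = 900

# 720p resolution.
WIDTH, HEIGHT = 1280, 720

# Assumption: 8-bit planar YUV 4:2:0, i.e. 1.5 bytes per pixel.
FRAME_BYTES = WIDTH * HEIGHT * 3 // 2


def yuv_to_avi_frame(yuv_frame: int) -> int:
    """Map a 1-based YUV frame number to its 1-based AVI frame number."""
    if yuv_frame < 1:
        raise ValueError("frame numbers are 1-based")
    return yuv_frame + YUV_TO_AVI_SHIFT


def avi_to_yuv_frame(avi_frame: int) -> int:
    """Map a 1-based AVI frame number to its 1-based YUV frame number."""
    yuv_frame = avi_frame - YUV_TO_AVI_SHIFT
    if yuv_frame < 1:
        raise ValueError("AVI frame precedes the first YUV frame")
    return yuv_frame


def yuv_frame_byte_offset(yuv_frame: int) -> int:
    """Byte offset of a 1-based YUV frame under the assumed pixel format."""
    if yuv_frame < 1:
        raise ValueError("frame numbers are 1-based")
    return (yuv_frame - 1) * FRAME_BYTES
```

For example, `yuv_to_avi_frame(1)` gives 901. If the files use a different chroma subsampling or bit depth, only `FRAME_BYTES` needs to change.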