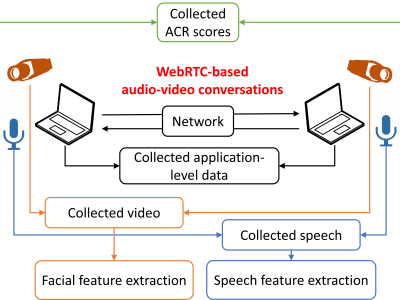

In the realm of real-time communications, WebRTC-based multimedia applications are increasingly prevalent because they can be smoothly integrated into Web browsing sessions. The browsing experience is significantly improved compared with scenarios that rely on browser add-ons and/or plug-ins; still, the end user's Quality of Experience (QoE) in WebRTC sessions may be affected by network impairments, such as delays and losses.

- Categories: