Open-set Occluded Person Identification with mmWave Radar

- Citation Author(s):

- Submitted by: Tao Wang

- Last updated:

- DOI: 10.21227/vkx6-fy49

- Data Format:

449 views

- Categories:

- Keywords:

Abstract

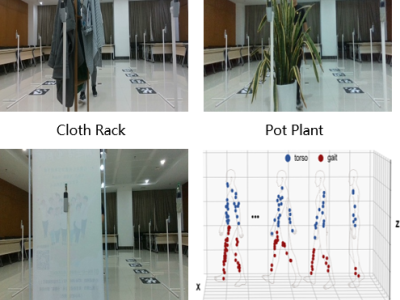

We introduce a novel multi-modal dataset comprising point cloud data from a mmWave radar and RGB and depth images from an RGB-D camera, collected from 23 human subjects. We use the TI IWR6843ISK-ODS radar to transmit and receive radar signals, paired with an Intel RealSense D435 camera that captures RGB and depth images at a resolution of 420 × 240. Because materials differ in how they absorb and reflect electromagnetic waves, our experiment employs three types of obstacles: a clothing rack, a poster board, and a pot plant. Additionally, to broaden the range of scenarios and evaluate radar sensor performance more comprehensively, walking data are also collected using the same experimental configuration without obstacles. We employ the cell-averaging constant false-alarm rate (CA-CFAR) algorithm and the maximum-energy time-frequency ridge extraction method to produce radar point clouds. In total, the dataset comprises over 600,000 radar point cloud frames derived from both signal processing algorithms and more than 600,000 RGB and depth images.

Instructions:

The dataset's file structure is as follows:

The mmWaveRadar.zip archive contains mmWave radar point clouds generated with the CA-CFAR algorithm and the maximum-energy time-frequency ridge extraction method. We configured three distinct false alarm rates (0.02, 0.05, and 0.08). Each false alarm rate has four scenario folders: "Empty," "Poster," "Plant," and "Cloth Rack." Within each scenario folder, the point clouds of 22 or 23 individuals are stored as '.txt' files. Each '.txt' file lists the 3D coordinates and velocity of every point in each radar point cloud frame, and frames are separated by empty lines.
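The frame layout described above can be read with a few lines of Python. The following is a minimal sketch, not the authors' loader. It assumes each non-empty line holds four numeric values in the order x, y, z, velocity, separated by whitespace or commas; the column order and delimiter are not stated on this page and should be checked against the actual files. The function name `parse_frames` is hypothetical.

```python
from typing import Iterable, List, Tuple

# Assumed column order (not confirmed by the dataset page): x, y, z, velocity.
Point = Tuple[float, float, float, float]


def parse_frames(lines: Iterable[str]) -> List[List[Point]]:
    """Group point lines into frames, treating empty lines as frame separators."""
    frames: List[List[Point]] = []
    current: List[Point] = []
    for line in lines:
        stripped = line.strip()
        if not stripped:
            # An empty line closes the frame collected so far.
            if current:
                frames.append(current)
                current = []
            continue
        # Accept whitespace- or comma-separated values (delimiter is an assumption).
        values = [float(token) for token in stripped.replace(",", " ").split()]
        if len(values) < 4:
            raise ValueError(f"expected at least 4 values per point, got: {stripped!r}")
        x, y, z, velocity = values[:4]
        current.append((x, y, z, velocity))
    # Keep the last frame if the file does not end with an empty line.
    if current:
        frames.append(current)
    return frames
```

A file can be passed directly, for example `with open(path) as f: frames = parse_frames(f)`, where `path` points to one individual's '.txt' file. Note that this sketch collapses consecutive empty lines, so a frame with no detected points, if the files encode one that way, would be dropped rather than kept as an empty list.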

RGBImages and RGBDData comprise paired RGB and depth images synchronized with the mmWave radar. Within each folder of RGBImages or RGBDImages, four subfolders correspond to the four scenarios. Each scenario folder contains 22 or 23 subfolders, one per individual, and each of these holds 7,200 images.
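As a quick sanity check after downloading, the folder counts above imply a range for the number of images in each image folder. The sketch below works through that arithmetic, assuming the 7,200-image figure applies to every individual in every scenario and that each of the four scenarios has either 22 or 23 individuals. The helper name `expected_image_count` is hypothetical.

```python
IMAGES_PER_INDIVIDUAL = 7200  # stated per-individual image count
NUM_SCENARIOS = 4             # the four scenario subfolders


def expected_image_count(individuals_per_scenario):
    """Total images in one image folder, given the number of individuals in each scenario."""
    return sum(n * IMAGES_PER_INDIVIDUAL for n in individuals_per_scenario)


# Bounds: every scenario has 22 individuals, or every scenario has 23.
lower_bound = expected_image_count([22] * NUM_SCENARIOS)  # 633,600
upper_bound = expected_image_count([23] * NUM_SCENARIOS)  # 662,400
print(f"Expected images per folder: {lower_bound:,} to {upper_bound:,}")
```

Under these assumptions each image folder should hold between 633,600 and 662,400 images, which is consistent with the "more than 600,000" figure given in the abstract.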