Monocular 3D Point Cloud Reconstruction Dataset (Mono3DPCL)

- Citation Author(s):

- AmirHossein Zamani (Concordia University)

- Submitted by:

- AmirHossein Zamani

- Last updated:

- DOI:

- 10.21227/d9ft-0n41

702 views

- Categories:

- Keywords:

Abstract

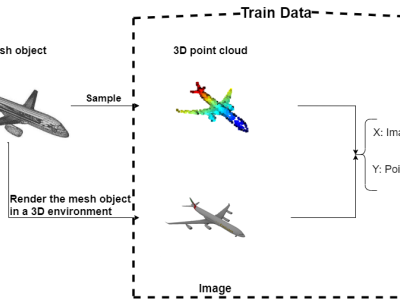

As with most AI methods, a 3D deep neural network must be trained to interpret its input data properly. In particular, training a network for monocular 3D point cloud reconstruction requires a large set of labeled, high-quality data, which can be challenging to obtain. This dataset therefore pairs an image of each known object with its corresponding 3D point cloud representation. To collect a large number of categorized 3D objects, we used the ShapeNetCore dataset (https://shapenet.org), a densely annotated subset of ShapeNet comprising 55 common object categories with 51,300 unique synthetic 3D models. These models are provided as meshes, so each had to be converted to a ground-truth 3D point cloud. We used the Open3D library (https://www.open3d.org) both to convert the meshes to point clouds and to capture a single image of each object. Each object was positioned at the center of the scene, and its image was exported using the same fixed-view camera for all objects. In this way, we prepared approximately 16,000 data pairs in 8 categories: airplane, bottle, car, bench, sofa, cellphone, bike, and speaker. Within each category, the data are divided into training (85%), validation (5%), and test (10%) subsets.
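The 85% / 5% / 10% division described above can be illustrated with a short sketch. This is not the author's splitting code: the function name `split_samples`, the seeded shuffle, and the sample identifiers are assumptions made purely for illustration.

```python
import random


def split_samples(sample_ids, train=0.85, val=0.05, seed=0):
    """Shuffle sample identifiers and split them into train/val/test lists.

    The test subset is whatever remains after the train and validation
    subsets are taken (about 10% with the default fractions).
    """
    ids = sorted(sample_ids)          # sort so the result does not depend on input order
    random.Random(seed).shuffle(ids)  # seeded shuffle, so the split is reproducible
    n_train = round(len(ids) * train)
    n_val = round(len(ids) * val)
    return {
        "train": ids[:n_train],
        "val": ids[n_train:n_train + n_val],
        "test": ids[n_train + n_val:],
    }


# Hypothetical identifiers for one category; the real names are not given in the source.
splits = split_samples([f"sample_{i:04d}" for i in range(2000)])
print({name: len(ids) for name, ids in splits.items()})
```

Splitting each category separately, as the abstract describes, keeps the category proportions the same across the three subsets.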

Instructions:

The dataset contains 8 folders, one per data category: airplane, bottle, car, bench, sofa, cellphone, bike, and speaker. Each category folder contains two folders, "Train" and "Test", which hold the data for that category. Each subfolder inside "Train" and "Test" contains three types of data: (i) the 3D point cloud object in ".ply" format, (ii) the RGB image in ".jpg" format, and (iii) a text file containing the 3D coordinates (x, y, z) of each point in the corresponding 3D point cloud object. These files can be loaded with a short Python script for use in downstream software.
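A minimal loading sketch for the layout described above, using only the Python standard library. Several details are assumptions, since the page does not specify them: the text file is taken to hold one point per line with three numbers separated by whitespace or commas, each sample subfolder is taken to hold one file of each type, and the names `read_xyz`, `index_split`, and the path `Mono3DPCL/airplane` are hypothetical.

```python
from pathlib import Path


def read_xyz(path):
    """Parse a coordinate text file into a list of (x, y, z) float tuples.

    Assumes one point per line, separated by whitespace or commas.
    """
    points = []
    with open(path, encoding="utf-8") as handle:
        for line in handle:
            parts = line.replace(",", " ").split()
            if len(parts) < 3:
                continue  # skip blank or incomplete lines
            try:
                points.append(tuple(float(v) for v in parts[:3]))
            except ValueError:
                continue  # skip header or other non-numeric lines
    return points


def index_split(category_dir, split="Train"):
    """Return one record per sample subfolder with paths to its data files."""
    records = []
    for sample in sorted(Path(category_dir, split).iterdir()):
        if not sample.is_dir():
            continue
        record = {"name": sample.name}
        for key, ext in (("ply", ".ply"), ("image", ".jpg"), ("xyz", ".txt")):
            matches = sorted(p for p in sample.iterdir() if p.suffix.lower() == ext)
            record[key] = matches[0] if matches else None  # None if the file is missing
        records.append(record)
    return records


if __name__ == "__main__":
    category = Path("Mono3DPCL/airplane")  # hypothetical location of one category folder
    if category.exists():
        for record in index_split(category, "Train")[:3]:
            n_points = len(read_xyz(record["xyz"])) if record["xyz"] else 0
            print(record["name"], n_points)
```

The ".ply" and ".jpg" files are only indexed here. Reading them requires a third-party library, for example Open3D for the point clouds and an image library for the pictures.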

(Please note that we had difficulty uploading the dataset to the IEEE DataPort website as a single zip file, so it is split across several zip files. Users should download all of the zip files and then organize the dataset folders as described above.)
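Reassembling the dataset from the separate downloads might look like the sketch below. It assumes all zip files sit in a single download folder and that extracting them into a common root yields the category folders directly. The archive names and their internal layout are not stated on this page, so some manual reorganization may still be needed. The function names and example paths are hypothetical.

```python
import zipfile
from pathlib import Path


def extract_all(download_dir, dataset_root):
    """Extract every .zip archive found in download_dir into dataset_root.

    Returns the names of the archives that were extracted.
    """
    dataset_root = Path(dataset_root)
    dataset_root.mkdir(parents=True, exist_ok=True)
    extracted = []
    for archive in sorted(Path(download_dir).glob("*.zip")):
        with zipfile.ZipFile(archive) as zf:
            zf.extractall(dataset_root)
        extracted.append(archive.name)
    return extracted


def list_categories(dataset_root):
    """List the top-level folders under dataset_root (expected: the 8 categories)."""
    return sorted(p.name for p in Path(dataset_root).iterdir() if p.is_dir())


if __name__ == "__main__":
    downloads = Path("downloads")  # hypothetical folder holding the downloaded zip files
    if downloads.exists():
        print(extract_all(downloads, "Mono3DPCL"))
        print(list_categories("Mono3DPCL"))
```

Checking the output of `list_categories` against the 8 category names is a quick way to confirm that no archive was missed.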

We appreciate your interest in our research. If you use this dataset, please cite it using the citation format given at the top of this page.