e-FLASH

- Submitted by: Jerry Gu

- DOI: 10.21227/qz96-yh44

457 views

Abstract

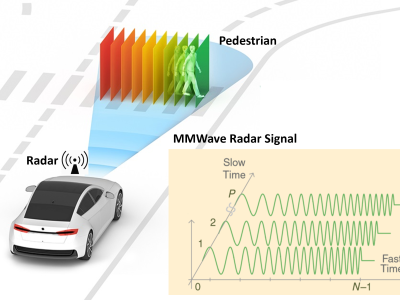

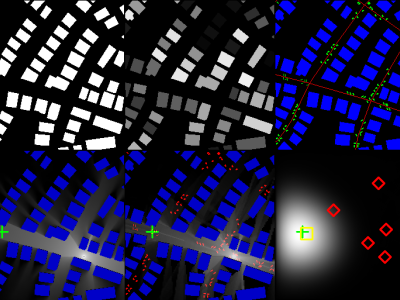

The increasing availability of multimodal data holds considerable promise for millimeter-wave (mmWave) multiple-antenna systems, because it can enhance situational awareness. Specifically, using non-RF modalities to complement RF-only data in communications-related decisions such as beam selection may speed up decision making in situations where the standard requires an exhaustive search spanning all candidate options. However, accelerating research on this topic requires real-world datasets collected in a principled manner. This article presents an experimentally obtained dataset of 23 GB that aids beam selection in vehicle-to-everything mmWave bands, with the goal of facilitating machine learning (ML) in the wireless communication required for autonomous driving. Beyond this specific example, the article describes methodologies for creating such datasets from time-synchronized, heterogeneous LiDAR, GPS, and camera image data, paired with RF ground-truth data of the selected beams in the mmWave band. While we use beam selection as the primary demonstrator, we also discuss how multimodal datasets may be used in other ML-based PHY-layer optimization areas, such as beamforming and localization.

Instructions:

This is the extended FLASH (Federated Learning for Automated Selection of High-band mmWave Sectors) dataset, or e-FLASH, in its processed form. The dataset is organized hierarchically by category, scenario, and episode. The rosbag .bag files, camera-to-image name mappings, and synchronized data and sector labels for each sample are also available. A complete description of the dataset, along with descriptions of all FLASH-related datasets, is available at https://genesys-lab.org/multimodal-fusion-nextg-v2x-communications. Please send any questions to gu.je@northeastern.edu.
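The category, scenario, and episode hierarchy can be indexed with a short script once the archive is extracted. The following is a minimal sketch, not the dataset's official tooling. It assumes the three levels map directly onto three nested directory levels (`root/category/scenario/episode/`), which is a guess from the description here and should be checked against the full dataset description. The function name `index_episodes` is hypothetical.

```python
from pathlib import Path
from typing import Dict, List, Tuple


def index_episodes(root: Path) -> Dict[Tuple[str, str, str], List[Path]]:
    """Map (category, scenario, episode) to the files under that episode.

    ASSUMPTION: the dataset is laid out as root/category/scenario/episode/.
    This layout is inferred, not documented here; adjust the glob pattern
    if the extracted archive is organized differently.
    """
    index: Dict[Tuple[str, str, str], List[Path]] = {}
    # Every directory exactly three levels below root is treated as an episode.
    for episode_dir in sorted(root.glob("*/*/*")):
        if not episode_dir.is_dir():
            continue
        category, scenario, episode = episode_dir.relative_to(root).parts
        # Collect all files in the episode, including nested subfolders.
        files = sorted(p for p in episode_dir.rglob("*") if p.is_file())
        index[(category, scenario, episode)] = files
    return index
```

Given the resulting index, the rosbag files of one episode could be selected by filtering on the `.bag` suffix, for example `[p for p in files if p.suffix == ".bag"]`.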