Datasets

Standard Dataset

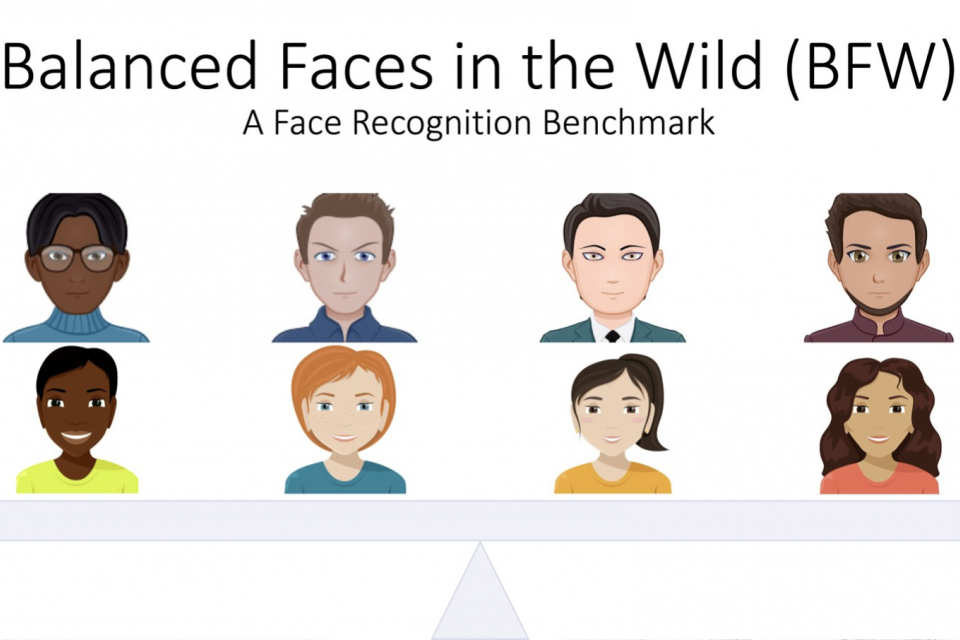

Balanced Faces in the Wild

- Submitted by: Joseph Robinson
- Last updated: Tue, 10/11/2022 - 12:38
- DOI: 10.21227/nmsj-df12


Abstract

This project investigates bias in automatic facial recognition (FR). Specifically, subjects are grouped into predefined subgroups based on gender, ethnicity, and age. We propose a novel image collection called Balanced Faces in the Wild (BFW), which is balanced across eight subgroups (i.e., each subgroup has 100 subjects with 25 face samples per subject, for 800 subjects in total). Along with the name (i.e., identification) labels and task protocols (e.g., list of pairs for face verification, pre-packaged data table with additional metadata and labels), BFW is grouped by ethnicity (i.e., Asian (A), Black (B), Indian (I), and White (W)) and gender (i.e., Female (F) and Male (M)). The motivation and intent are for BFW to serve as a proxy for characterizing FR systems, now that demographic-specific analysis is possible. For instance, various confusion metrics and the predefined criterion (i.e., score threshold) are fundamental when characterizing the performance of FR systems. The following visualization summarizes the confusion metrics in a way that relates to the different measurements.

Contents of the compressed folders are as follows:

- `face-samples/`: Face images organized in directories with the following structure: `<ethnicity>_<gender>/<subject id>/<face file>.jpg`
- `features/`: BFW faces were encoded and organized by the name of the model used. Included are features extracted using the keras-vggface repo, specifically with the following models:
  - VGG16
  - ResNet-50
  - SENet-50

- `meta/`
  - `bfw-[version]-datatable.[pkl,csv]`: List of pairs with corresponding tags for class labels (1/0), along with subgroup, gender, and ethnicity labels. All metadata needed for experiments can be found in the Python 3 pickle file and in the CSV file, which are distinguished by file extension.
  - `bfw-mtcnn-face-detections.pkl`: Python 3 pickle file containing face fiducial points and bounding-box (BB) information. Note that the faces in `face-samples/` are pre-cropped but not aligned, so that the preferred alignment method can be applied. Please reach out to discuss how best to align.
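The directory convention for face images can be unpacked with a few lines of Python. The following is only a minimal sketch: `FaceRef` and `parse_face_path` are hypothetical names introduced for illustration, and the code assumes that a single underscore separates ethnicity from gender in the top-level directory name.

```python
from pathlib import PurePosixPath
from typing import NamedTuple


class FaceRef(NamedTuple):
    """Parts of a relative face path (hypothetical helper type)."""
    ethnicity: str   # e.g. "asian"
    gender: str      # e.g. "females"
    subject_id: str  # e.g. "n000009"
    filename: str    # e.g. "0010_01.jpg"


def parse_face_path(rel_path: str) -> FaceRef:
    """Split '<ethnicity>_<gender>/<subject id>/<face file>.jpg' into its parts."""
    parts = PurePosixPath(rel_path).parts
    if len(parts) != 3:
        raise ValueError(f"expected 3 path components, got {len(parts)}: {rel_path!r}")
    subgroup, subject_id, filename = parts
    # Assumption: exactly one underscore separates ethnicity from gender.
    ethnicity, sep, gender = subgroup.partition("_")
    if not sep or not ethnicity or not gender:
        raise ValueError(f"expected '<ethnicity>_<gender>', got {subgroup!r}")
    return FaceRef(ethnicity, gender, subject_id, filename)


ref = parse_face_path("asian_females/n000009/0010_01.jpg")
print(ref.ethnicity, ref.gender, ref.subject_id, ref.filename)
```

Returning a named tuple keeps the four components addressable by name, which is convenient when joining image paths against the metadata files.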

Data Structure

Paired faces and all corresponding metadata are organized as a pandas DataFrame (i.e., `bfw-[version]-datatable.[pkl,csv]`), which is formatted as follows.

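| ID | fold | p1 | p2 | label | id1 | id2 | att1 | att2 | vgg16 | resnet50 | senet50 | a1 | a2 | g1 | g2 | e1 | e2 | sphereface |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 1 | asian_females/n000009/0010_01.jpg | asian_females/n000009/0043_01.jpg | 1 | 0 | 0 | asian_females | asian_females | 0.820 | 0.703 | 0.679 | AF | AF | F | F | A | A | 0.393 |
| 1 | 1 | asian_females/n000009/0010_01.jpg | asian_females/n000009/0120_01.jpg | 1 | 0 | 0 | asian_females | asian_females | 0.719 | 0.524 | 0.594 | AF | AF | F | F | A | A | 0.354 |
| 2 | 1 | asian_females/n000009/0010_01.jpg | asian_females/n000009/0122_02.jpg | 1 | 0 | 0 | asian_females | asian_females | 0.732 | 0.528 | 0.644 | AF | AF | F | F | A | A | 0.302 |
| 3 | 1 | asian_females/n000009/0010_01.jpg | asian_females/n000009/0188_01.jpg | 1 | 0 | 0 | asian_females | asian_females | 0.607 | 0.348 | 0.459 | AF | AF | F | F | A | A | -0.009 |
| 4 | 1 | asian_females/n000009/0010_01.jpg | asian_females/n000009/0205_01.jpg | 1 | 0 | 0 | asian_females | asian_females | 0.629 | 0.384 | 0.495 | AF | AF | F | F | A | A | 0.133 |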

- ID: index (i.e., row number) of the dataframe ([0, N], where N is the pair count).
- fold: fold number of the five-fold experiment [1, 5].
- p1 and p2: relative image paths of the two faces in the pair.
- label: ground truth ([0, 1] for non-match and match, respectively).
- id1 and id2: subject IDs for the faces in the pair ([0, M], where M is the number of unique subjects).
- att1 and att2: attributes of the subjects in the pair.
- vgg16, resnet50, senet50, and sphereface: cosine similarity score for the respective model.
- a1 and a2: abbreviated attribute tags of the subjects in the pair [AF, AM, BF, BM, IF, IM, WF, WM].
- g1 and g2: abbreviated gender tags of the subjects in the pair [F, M].
- e1 and e2: abbreviated ethnicity tags of the subjects in the pair [A, B, I, W].
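To illustrate how these columns support demographic-specific analysis, the sketch below reads pairs from a CSV data table and computes the true-positive and false-positive rates per subgroup at a fixed score threshold. It is an illustration under stated assumptions, not the authors' evaluation protocol: `read_pairs` and `rates_by_subgroup` are hypothetical names, the threshold of 0.5 is arbitrary, each pair is attributed to the subgroup in `a1` for simplicity, and the sample is an excerpt of the rows shown above reduced to three columns.

```python
import csv
import io
from collections import defaultdict


def read_pairs(file_obj, score_col="senet50"):
    """Yield (subgroup, label, score) for each pair in a CSV data table."""
    for row in csv.DictReader(file_obj):
        # Simplification: attribute the pair to the subgroup of its first face.
        yield row["a1"], int(row["label"]), float(row[score_col])


def rates_by_subgroup(pairs, threshold):
    """Return {subgroup: {"tpr": ..., "fpr": ...}} at a fixed score threshold."""
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "fp": 0, "tn": 0})
    for subgroup, label, score in pairs:
        predicted_match = score >= threshold
        if label == 1:
            key = "tp" if predicted_match else "fn"
        else:
            key = "fp" if predicted_match else "tn"
        counts[subgroup][key] += 1

    rates = {}
    for subgroup, c in counts.items():
        genuine = c["tp"] + c["fn"]
        impostor = c["fp"] + c["tn"]
        rates[subgroup] = {
            # None marks a rate that is undefined because no such pairs exist.
            "tpr": c["tp"] / genuine if genuine else None,
            "fpr": c["fp"] / impostor if impostor else None,
        }
    return rates


# Excerpt of the sample rows, reduced to the columns used here.
SAMPLE_CSV = """label,a1,senet50
1,AF,0.679
1,AF,0.594
1,AF,0.644
1,AF,0.459
1,AF,0.495
"""

rates = rates_by_subgroup(read_pairs(io.StringIO(SAMPLE_CSV)), threshold=0.5)
print(rates)
```

With the five genuine pairs in the excerpt, three SENet-50 scores reach the threshold, so the AF subgroup gets a true-positive rate of 0.6, while its false-positive rate stays undefined because the excerpt contains no non-match pairs. For the full table, `pandas.read_csv` or `pandas.read_pickle` followed by a `groupby` on `a1` would be a natural alternative.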

[1] Robinson, Joseph P., Gennady Livitz, Yann Henon, Can Qin, Yun Fu, and Samson Timoner. "Face recognition: too bias, or not too bias?" In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, pp. 0-1. 2020.

[2] Robinson, Joseph P., Can Qin, Yann Henon, Samson Timoner, and Yun Fu. "Balancing Biases and Preserving Privacy on Balanced Faces in the Wild." In CoRR arXiv:2103.09118, (2021).
