CATARACTS

- Citation Author(s):

- Hassan ALHAJJ, Mathieu Lamard, Pierre-henri Conze, Béatrice Cochener, Gwenolé Quellec

- Submitted by:

- Hassan ALHAJJ

- DOI:

- 10.21227/ac97-8m18

Abstract

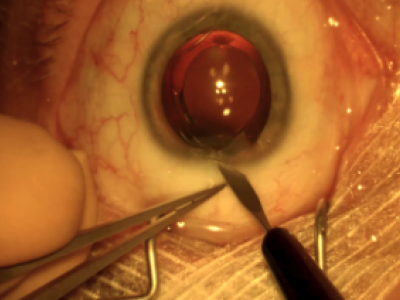

A fundamental building block of any computer-assisted intervention (CAI) system is the ability to automatically understand what the surgeon is doing throughout the surgery. In other words, recognizing the surgical activities being performed or the tools being used by the surgeon can be deemed an essential step toward CAI. The main motivation for these tasks is to design efficient solutions for surgical workflow analysis. The CATARACTS dataset was proposed in this context. It consists of 50 cataract surgeries and was annotated for two main tasks: surgical tool presence detection and surgical activity recognition. It was divided into two sets (train, test) for the surgical tool presence detection task and three sets (train, dev, test) for the activity recognition task. The dataset was used in three different challenges: CATARACTS2017, CATARACTS2018 and CATARACTS2020. The ground truth of the test sets of these challenges has now been released so that the community can use it to benchmark algorithms for surgical workflow analysis.

Instructions:

The dataset consists of videos of 50 cataract surgeries performed at Brest University Hospital. Patients were 61 years old on average (minimum: 23, maximum: 83, standard deviation: 10). Each surgery was recorded in two videos: the microscope video and the surgical tray video. The frame resolution was 1920x1080 pixels (full HD) for both types of videos. The frame rate was approximately 30 frames per second for the microscope (tool-tissue interaction) videos and 50 frames per second for the surgical tray videos. Microscope videos had a duration of 10 minutes 56 s on average (minimum: 6 minutes 23 s, maximum: 40 minutes 34 s, standard deviation: 6 minutes 5 s). Surgical tray videos had a duration of 11 minutes 3 s on average (minimum: 6 minutes 30 s, maximum: 40 minutes 48 s, standard deviation: 6 minutes 3 s). In total, more than nine hours of surgery were recorded for each video type. For more details about the dataset and the different tasks proposed, please refer to the links provided in the abstract.
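
The total recording time follows from the mean durations above: 50 × 10 min 56 s ≈ 9.1 h of microscope video and 50 × 11 min 3 s ≈ 9.2 h of tray video, which is consistent with "more than nine hours" per video type. The minimal Python sketch below reproduces this arithmetic and adds helpers for converting between frame indices and timestamps at the nominal frame rates. The helper names are hypothetical and not part of any official CATARACTS tooling. Because the quoted frame rates are only approximate, the actual rate of each video file should be read from its metadata before relying on frame/time conversions.

```python
"""Back-of-the-envelope checks on the CATARACTS video statistics.

Assumption: the nominal frame rates below (about 30 fps microscope, 50 fps
tray) are the approximate values quoted in the dataset description. The
helper functions are hypothetical illustrations, not official tooling.
"""

NUM_SURGERIES = 50
MICROSCOPE_FPS = 30.0  # approximate; check the actual rate of each file
TRAY_FPS = 50.0        # approximate; check the actual rate of each file


def to_seconds(minutes: int, seconds: int) -> int:
    """Convert an 'X minutes Y s' duration into seconds."""
    return minutes * 60 + seconds


def total_hours(mean_seconds: float, count: int = NUM_SURGERIES) -> float:
    """Estimate total recorded time as mean duration times number of videos."""
    return mean_seconds * count / 3600.0


def frame_to_seconds(frame_index: int, fps: float) -> float:
    """Timestamp (in seconds) of a 0-based frame index at a nominal frame rate."""
    return frame_index / fps


def seconds_to_frame(t: float, fps: float) -> int:
    """Nearest 0-based frame index for a timestamp at a nominal frame rate."""
    return round(t * fps)


if __name__ == "__main__":
    mic_mean = to_seconds(10, 56)  # mean microscope video duration in seconds
    tray_mean = to_seconds(11, 3)  # mean tray video duration in seconds
    print(f"microscope: {total_hours(mic_mean):.2f} h")  # 9.11 h
    print(f"tray:       {total_hours(tray_mean):.2f} h")  # 9.21 h
    # Approximate frame count of a mean-length microscope video at ~30 fps.
    print(seconds_to_frame(mic_mean, MICROSCOPE_FPS))  # 19680
```

Keeping the frame rate as an explicit parameter, rather than hard-coding it in the conversion helpers, makes it easy to substitute the exact per-file rate once it has been read from the video container.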

Please note that the evaluation scripts used in the challenges (for the microscope test set) are now available. For CATARACTS 2018, in addition to the videos, we provide the images used in the challenge (images.zip) and the ground truth.

If you use this dataset, please cite the following paper:

Al Hajj, Hassan, et al. "CATARACTS: Challenge on automatic tool annotation for cataRACT surgery." Medical Image Analysis 52 (2019): 24-41.
