MPSC_MV

- Citation Author(s):

- Masud Ahmed (University of Maryland, Baltimore County)

- Zahid Hasan (University of Maryland, Baltimore County)

- Submitted by:

- Masud Ahmed

- Last updated:

- DOI:

- 10.21227/khwg-kj52

234 views


- Categories:

Abstract

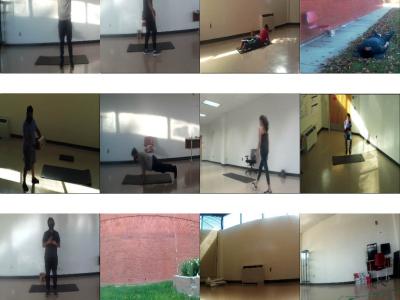

Deep video representation learning has recently attained state-of-the-art performance in video action recognition (VAR). However, the performance of these models degrades significantly when they are applied to video clips captured from varied perspectives. The representations learned by existing VAR models frequently entangle view information with action attributes, making it difficult to learn a view-invariant representation. To study the properties of multiview representations, we collected a large-scale, time-synchronized multiview video dataset of 10 subjects performing 10 different actions in both indoor and outdoor settings, recorded from three horizontal and vertical viewpoints using a smartphone, an action camera, and a drone camera. We provide the multiview video dataset together with various metadata to facilitate further research on robust VAR systems.

Instructions:

This is a partial dataset. We will upload the full dataset, including a data loader, soon.