Heidelberg Spiking Datasets

- Citation Author(s):

-

Benjamin CramerYannik Stradmann

- Submitted by:

- Friedemann Zenke

- Last updated:

- DOI:

- 10.21227/51gn-m114

- Data Format:

- Research Article Link:

- Links:

2815 views

2815 views

- Categories:

- Keywords:

Abstract

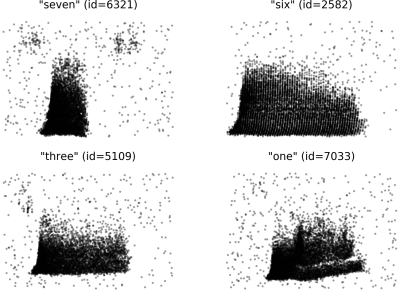

The Heidelberg Spiking Datasets comprise two spike-based classification datasets: The Spiking Heidelberg Digits (SHD) dataset and the Spiking Speech Command (SSC) dataset. The latter is derived from Pete Warden's Speech Commands dataset (https://arxiv.org/abs/1804.03209), whereas the former is based on a spoken digit dataset recorded in-house and included in this repository. Both datasets were generated by applying a detailed inner ear model to audio recordings. We distribute the input spikes and target labels in HDF5 format. SHD as well as SSC are released under the Creative Commons Attribution 4.0 International License.

Instructions:

We provide two distinct classification datasets for spiking neural networks. | Name | Classes | Samples (train/valid/test) | Parent dataset | URL | | ---- | ------- | ------ | ------------------------- | --- | | SHD | 20 | 8332/-/2088 | Heidelberg Digits (HD) | https://compneuro.net/datasets/hd_audio.tar.gz | | SSC | 35 | 75466/9981/20382 | Speech Commands v0.2 | https://arxiv.org/abs/1804.03209 | Both datasets are based on respective audio datasets. Spikes in 700 input channels were generated using an artificial cochlea model. The SHD consists of approximately 10000 high-quality aligned studio recordings of spoken digits from 0 to 9 in both German and English language. Recordings exist of 12 distinct speakers two of which are only present in the test set. The SSC is based on the Speech Commands release by Google which consists of utterances recorded from a larger number of speakers under less controlled conditions. It contains 35 word categories from a larger number of speakers.

Dataset Files

- Heidelberg Digits audio files (Size: 321.44 MB)

- Spiking Heidelberg Digits test set (Size: 36.37 MB)

- Spiking Heidelberg Digits training set (Size: 124.78 MB)

- Spiking Speech Commands training set (Size: 1.11 GB)

- Spiking Speech Commands validation set (Size: 148.34 MB)

- Spiking Speech Commands test set (Size: 308.34 MB)