A case base of eXplainable Artificial Intelligence of the Things (XAIoT) systems

- Citation Author(s):

- Humberto Parejas-Llanovarced (Universidad Complutense de Madrid), Jesús Darias (Universidad Complutense de Madrid)

- Submitted by:

- Juan Recio-Garcia

- Last updated:

- DOI:

- 10.21227/4nb2-q910

- Data Format:

334 views


- Categories:

- Keywords:

Abstract

The increasing complexity of intelligent systems in the Internet of Things (IoT) domain makes it essential to explain their behavior and decision-making processes to users. However, selecting an appropriate explanation method for a particular intelligent system in this domain can be challenging, given the diverse range of available XAI (eXplainable Artificial Intelligence) methods and the heterogeneity of IoT applications. This dataset is a case base elicited from an exhaustive literature review of existing explanation solutions for AIoT (Artificial Intelligence of the Things) systems.

Instructions:

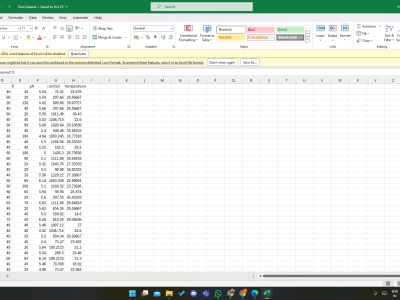

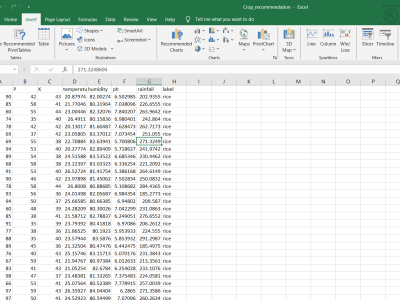

Standard CSV file.

Domains: Aviation, Energy Management, Environment, Healthcare, Industry, Security, Smart Agriculture, and Transportation

AI Model: Case-Based Reasoning (CBR), Ensemble Model (EM), Fuzzy Model (FM), Neuro-Fuzzy Model (NFM), Neural Network (NN), Nearest Neighbours Model (NNN), Tree-Based Model (TB), Unsorted Model (UM)

AI Task: Anomaly detection, Assistance, Automated maneuvering, Autonomous processes and robotics, Business Management, Cyber attacks detection, Decision support, Facial recognition, Image processing, Process quality improvement, Internet of Behaviour, Intrusion detection, Modelling, Predictive maintenance, Recommendation, and Risk prediction

AI Problem: classification or regression

XAI method Concurrentness: ante-hoc or post-hoc

XAI method Scope: local or global

XAI method Portability: model-specific or model-agnostic

XAI method: ANFIS, FDE, LIME, SHAP, t-SNE, Integrated Gradients, OC-Tree, Ada-WHIPS, ALMMo-0*, ApparentFlow-net, CGP, CTree, Grad-CAM, KSL, LORE, Ontological Perturbation, RBIA, RetainVis, RPART, xDNN, Concept Attribution, Encoder-Decoder, HihO, SIDU, CIT2FS, iNNvestigate, SALIENCY (XAI-CBIR), CART, Feature Importance, FFT, J48, Prescience, DIFFI, GSX, Saliency Map, Decision Tree, Deep-SHAP, ELI5, XAI360*, CAM, TRUST, QMC, RuleFit, PDP, Attention Maps, RISE, XRAI.

XAI technique: Activation Clusters, Architecture Modification, Composite, Data-driven, Feature Relevance, Filter, Knowledge Extraction, Optimisation Based, Probabilistic, Simplification, and Statistics
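
The attributes above describe each case, so one plausible use of the file is a simple retrieval step: given the description of a new AIoT system, find the most similar stored case and look at the XAI method it used. The following is a minimal sketch under stated assumptions, not the authors' method. The column names (`Domain`, `AIModel`, `AITask`, `AIProblem`, `XAIMethod`, and so on) and the three sample rows are hypothetical and made up for illustration; the real CSV header and contents may differ. The similarity measure (fraction of exactly matching attributes) is also an assumption chosen for brevity.

```python
import csv
import io

# Hypothetical header and rows, invented for illustration only.
# They are NOT taken from the dataset; the real CSV may use other names.
SAMPLE_CSV = """Domain,AIModel,AITask,AIProblem,Concurrentness,Scope,Portability,XAIMethod
Healthcare,NN,Image processing,classification,post-hoc,local,model-specific,Grad-CAM
Industry,TB,Predictive maintenance,regression,post-hoc,global,model-agnostic,SHAP
Security,EM,Intrusion detection,classification,post-hoc,local,model-agnostic,LIME
"""


def load_cases(text):
    """Parse CSV text into a list of dicts, one per case."""
    return list(csv.DictReader(io.StringIO(text)))


def similarity(case, query):
    """Fraction of query attributes that match the case exactly
    (case-insensitive). An assumed, deliberately simple measure."""
    matches = sum(
        1
        for field, value in query.items()
        if case.get(field, "").strip().lower() == value.strip().lower()
    )
    return matches / len(query)


def retrieve(cases, query, k=1):
    """Return the k cases most similar to the query, best first."""
    ranked = sorted(cases, key=lambda c: similarity(c, query), reverse=True)
    return ranked[:k]


cases = load_cases(SAMPLE_CSV)

# Description of a new system for which an explanation method is needed.
query = {
    "Domain": "Healthcare",
    "AIModel": "NN",
    "AITask": "Anomaly detection",
    "AIProblem": "classification",
}

best = retrieve(cases, query, k=1)[0]
print(best["XAIMethod"], similarity(best, query))
```

To run this against the actual file, replace `load_cases(SAMPLE_CSV)` with a `csv.DictReader` over the downloaded CSV and adjust the field names in `query` to the real header.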