A Subject Data of ARKitFace Dataset

- Citation Author(s):

- Yueying Kao, Bowen Pan, Miao Xu, Jiangjing Lyu, Xiangyu Zhu, Yuanzhang Chang, Xiaobo Li, Zhen Lei

- Submitted by:

- Miao Xu

- Last updated:

- DOI:

- 10.21227/jfbk-0j17

777 views


- Categories:

- Keywords:

Abstract

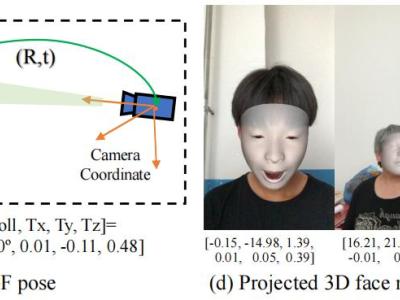

The ARKitFace dataset was built for training and evaluating both 3D face shape and 6DoF pose estimation under perspective projection. A total of 500 volunteers, aged 9 to 60, were invited to record the dataset. Each volunteer sat in a random environment with the 3D acquisition device fixed in front of them at a distance of about 0.3 m to 0.9 m. Each subject was asked to perform 33 specific expressions with two head movements (looking from left to right, and looking from up to down). The 3D acquisition device was an iPhone 11. The shape and location of the face are tracked by the structured-light sensor, and the triangle mesh and 6DoF information for the RGB images are obtained from the built-in ARKit toolbox. The triangle mesh consists of 1,220 vertices and 2,304 triangles. In total, 902,724 2D facial images (resolution 1280 × 720 or 1440 × 1280) with ground-truth 3D mesh and 6DoF pose annotations were collected. All 500 subjects consented to the use of their data. We will release the 2D facial images, 3D meshes and 6DoF pose annotations of all subjects under their authorization. We will not release personal private information such as age and gender. The data of one subject has been uploaded to IEEE DataPort as an example.

Instructions:

Please read README.md
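
As a rough illustration of how a 3D mesh, a 6DoF pose and a perspective projection relate to each other, the sketch below applies a rotation and translation to mesh vertices and projects them with a pinhole camera model. It is not the dataset's loader: the function names, the camera convention (camera looking along +Z, units in metres), the intrinsics `fx`, `fy`, `cx`, `cy` and the toy vertices are all assumptions made for this example. The actual file layout and coordinate conventions are described in README.md.

```python
import math


def rotation_y(angle_rad):
    """Return a 3x3 rotation matrix for a turn about the Y axis (yaw)."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [[c, 0.0, s],
            [0.0, 1.0, 0.0],
            [-s, 0.0, c]]


def project_vertices(vertices, rotation, translation, fx, fy, cx, cy):
    """Apply a 6DoF pose, then a pinhole perspective projection.

    vertices:    list of (x, y, z) points in the face (model) frame.
    rotation:    3x3 row-major rotation matrix (3 rotational DoF).
    translation: (tx, ty, tz) offset (3 translational DoF).
    fx, fy:      focal lengths in pixels (assumed, not from the dataset).
    cx, cy:      principal point in pixels (assumed, not from the dataset).
    Returns a list of (u, v) pixel coordinates.
    """
    tx, ty, tz = translation
    pixels = []
    for x, y, z in vertices:
        # Rigid transform: model frame -> camera frame.
        xc = rotation[0][0] * x + rotation[0][1] * y + rotation[0][2] * z + tx
        yc = rotation[1][0] * x + rotation[1][1] * y + rotation[1][2] * z + ty
        zc = rotation[2][0] * x + rotation[2][1] * y + rotation[2][2] * z + tz
        if zc <= 0.0:
            raise ValueError("vertex lies on or behind the camera plane")
        # Perspective divide: points farther away move toward the principal point.
        pixels.append((fx * xc / zc + cx, fy * yc / zc + cy))
    return pixels


# Hypothetical values for illustration only: two toy vertices placed 0.5 m
# in front of the camera, inside the 0.3 m to 0.9 m range mentioned above.
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
toy_vertices = [(0.0, 0.0, 0.0), (0.05, 0.0, 0.0)]
pixels = project_vertices(toy_vertices, identity, (0.0, 0.0, 0.5),
                          fx=1000.0, fy=1000.0, cx=640.0, cy=360.0)
print(pixels)
```

With a real sample, `toy_vertices` would be replaced by the 1,220 mesh vertices and the pose and intrinsics by the values shipped with the data, once their format has been checked against README.md.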
