Learned Stereo

- Citation Author(s):

- Jeremy Ma, Krishna Shankar, Mark Tjersland, Kevin Stone, Max Bajracharya

- Submitted by:

- Jeremy Ma

- DOI:

- 10.21227/s1gy-3v36

Abstract

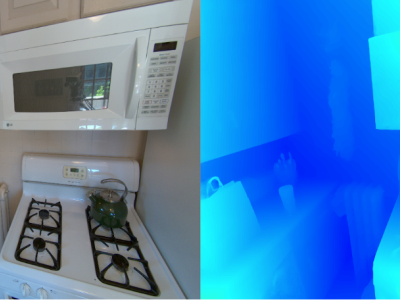

We present a passive stereo depth system that produces dense and accurate point clouds optimized for human environments, including dark, textureless, thin, reflective and specular surfaces and objects, at 2560x2048 resolution, with 384 disparities, in 30 ms. The system consists of an algorithm that combines learned stereo matching with engineered filtering, a training and data-mixing methodology, and a sensor hardware design. Our architecture is 15x faster than approaches that perform similarly on the Middlebury and FlyingThings stereo benchmarks. To effectively supervise the training of this model, we combine real data labelled using off-the-shelf depth sensors with a number of different rendered, simulated labelled datasets. We demonstrate the efficacy of our system by presenting a large number of qualitative results in the form of depth maps and point clouds, experiments validating the metric accuracy of our system, and comparisons to other sensors on challenging objects and scenes. We also show the competitiveness of our algorithm compared to state-of-the-art learned models using the Middlebury and FlyingThings datasets.
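Point clouds like the ones described above are usually obtained from a rectified stereo disparity map through the standard pinhole relation Z = f * B / d (focal length f in pixels, baseline B, disparity d). The following is a minimal sketch of that generic conversion, not the authors' code. The `StereoIntrinsics` fields, the calibration values, and the function names are hypothetical placeholders, since the source gives no calibration. It also shows how a 384-disparity search range puts a lower bound on the closest measurable depth.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

Point3D = Tuple[float, float, float]


@dataclass(frozen=True)
class StereoIntrinsics:
    """Hypothetical rectified pinhole stereo calibration (not from the source)."""
    focal_px: float    # focal length in pixels (shared by both rectified cameras)
    cx: float          # principal point, x (pixels)
    cy: float          # principal point, y (pixels)
    baseline_m: float  # distance between the two camera centres (metres)


def disparity_to_point(u: float, v: float, disparity_px: float,
                       cam: StereoIntrinsics) -> Optional[Point3D]:
    """Back-project left-image pixel (u, v) with a disparity to (X, Y, Z) in metres.

    Returns None for non-positive disparity, which carries no valid depth.
    """
    if disparity_px <= 0.0:
        return None
    z = cam.focal_px * cam.baseline_m / disparity_px  # Z = f * B / d
    x = (u - cam.cx) * z / cam.focal_px
    y = (v - cam.cy) * z / cam.focal_px
    return (x, y, z)


def disparity_map_to_cloud(disparity: Sequence[Sequence[float]],
                           cam: StereoIntrinsics,
                           max_disparity: int = 384) -> List[Point3D]:
    """Convert a row-major disparity map into a list of 3D points.

    Pixels outside the assumed search range (0, max_disparity) are skipped.
    """
    cloud: List[Point3D] = []
    for v, row in enumerate(disparity):
        for u, d in enumerate(row):
            if 0.0 < d < max_disparity:
                point = disparity_to_point(float(u), float(v), d, cam)
                if point is not None:
                    cloud.append(point)
    return cloud


def min_depth_m(cam: StereoIntrinsics, max_disparity: int = 384) -> float:
    """Depth bound implied by the search range: since d < max_disparity,
    every valid point satisfies Z > f * B / max_disparity."""
    return cam.focal_px * cam.baseline_m / max_disparity


if __name__ == "__main__":
    # Hypothetical calibration values, for illustration only.
    cam = StereoIntrinsics(focal_px=1000.0, cx=1.0, cy=1.0, baseline_m=0.1)
    cloud = disparity_map_to_cloud([[0.0, 10.0], [400.0, 20.0]], cam)
    print(len(cloud), cloud)
    print(f"depth bound: {min_depth_m(cam):.3f} m")
```

The per-pixel loop is written for readability only. At 2560x2048 a real pipeline would vectorize this step or run it on the GPU. The inverse relation between disparity and depth is also why a wide disparity range matters: more disparities let the sensor resolve objects closer to the cameras.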

Instructions:

Please see the included README file for further instructions.